How to Make Data Centers AI-Ready with MQTT and HiveMQ

Why Legacy Data Center Infrastructure Cannot Meet the Demands of Modern AI Workloads

The data center architectures that served the industry well through two decades of web-scale and enterprise cloud computing share a fundamental characteristic: they were designed for predictable, transactional workloads. Email, e-commerce, and SaaS applications follow growth curves that can be modeled, so capacity can be planned and provisioned in advance. Rack power densities in these environments typically ranged from 3 to 8 kilowatts. Air cooling was sufficient. Monitoring intervals measured in minutes were acceptable because conditions did not change faster than that.

AI has invalidated every one of these assumptions. Large language model (LLM) training runs and generative AI (GenAI) inference introduce rack-level power demands that now reach 50, 80, and in leading-edge deployments 120 kilowatts. Liquid cooling systems - direct-to-chip and full immersion - are no longer optional; they are required. Electrical architectures are migrating from 12-volt to 48-volt designs to reduce energy loss at higher power densities.
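The reasoning behind the 12-volt to 48-volt migration can be checked with a few lines of arithmetic. For a fixed load, current falls in proportion to voltage, and resistive loss scales with the square of current. The sketch below assumes a hypothetical 1 kW load and a hypothetical 1 milliohm distribution path; neither figure comes from a real design, and the ratio does not depend on them.

```python
def conduction_loss_watts(load_w: float, bus_voltage_v: float, path_resistance_ohm: float) -> float:
    """Resistive loss (I^2 * R) in the distribution path for a given load and bus voltage."""
    current_a = load_w / bus_voltage_v
    return current_a ** 2 * path_resistance_ohm


# Hypothetical illustration values, not taken from any real rack design.
LOAD_W = 1_000.0
PATH_RESISTANCE_OHM = 0.001

loss_12v = conduction_loss_watts(LOAD_W, 12.0, PATH_RESISTANCE_OHM)
loss_48v = conduction_loss_watts(LOAD_W, 48.0, PATH_RESISTANCE_OHM)

# Quadrupling the voltage cuts current by 4x and resistive loss by 4^2 = 16x.
loss_ratio = loss_12v / loss_48v
print(f"12 V loss is {loss_ratio:.0f}x the 48 V loss")
```

Under these assumptions the 12-volt path dissipates sixteen times as much as the 48-volt path for the same load, which is why the gap widens as rack power climbs.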

Global data center capacity demand is projected to grow from 60 gigawatts in 2023 to 219 gigawatts by 2030 in a midrange scenario, with potential peaks approaching 300 gigawatts. In the United States alone, a 15 gigawatt capacity shortfall is expected even if all currently planned builds are completed. The strategic response from hyperscalers and industrial operators is visible: new data center campuses are being developed in regions chosen for transmission capacity, permitting speed, and energy pricing.

The next wave of AI innovation will not be limited by algorithms or talent, but by infrastructure. Addressing the infrastructure demands of AI requires more than concrete and capital - it demands visibility.

Yet the physical infrastructure challenge, significant as it is, is not the primary constraint. The constraint is operational intelligence: the ability to understand, in real time, what is happening across every subsystem of a facility and to act on that understanding faster than AI workloads change conditions. Most data centers today rely on fragmented monitoring stacks in which HVAC, power, compute and network telemetry are siloed, polled intermittently and locked in proprietary systems.

Liquid cooling infrastructure illustrates the stakes concretely. Flow rates, coolant pressure, fluid temperature and pump health are now mission-critical variables. A sensor anomaly that goes undetected for five minutes in an air-cooled environment might be acceptable. The same anomaly in a liquid-cooled, 120-kilowatt rack can lead to thermal runaway and catastrophic hardware failure. Static monitoring tools and delayed feedback loops do not just reduce efficiency in this environment - they actively increase risk.
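To make the point about delayed feedback concrete, here is a minimal sketch of a per-reading range check for a coolant loop, evaluated the moment a reading arrives rather than on a multi-minute poll. The metric names and limits are hypothetical placeholders; real limits come from the cooling vendor and the facility design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    low: float
    high: float


# Hypothetical operating limits, for illustration only.
LIMITS = {
    "flow_lpm": Limit(30.0, 60.0),
    "pressure_kpa": Limit(150.0, 350.0),
    "supply_temp_c": Limit(15.0, 45.0),
}


def out_of_range(reading: dict[str, float]) -> list[str]:
    """Return the names of metrics that are missing or outside their limits."""
    violations = []
    for name, limit in LIMITS.items():
        value = reading.get(name)
        # A missing value is treated as a violation: a silent sensor is itself an anomaly.
        if value is None or not (limit.low <= value <= limit.high):
            violations.append(name)
    return violations


healthy = {"flow_lpm": 45.0, "pressure_kpa": 220.0, "supply_temp_c": 30.0}
degraded = {"flow_lpm": 12.0, "pressure_kpa": 220.0}  # low flow, temperature sensor silent

print(out_of_range(healthy))
print(out_of_range(degraded))
```

Treating a missing metric as a violation is a deliberate choice in this sketch: in a liquid-cooled rack, a sensor that stops reporting deserves the same urgency as one reporting a bad value.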

How MQTT, Unified Namespace and Event-Driven Architecture Deliver AI-Ready Data Center Infrastructure

The path to AI-ready infrastructure runs through a reframing that is architectural in nature: treating the data center as a data problem, not just a physical infrastructure problem. Every subsystem - EPMS, BMS, PDU, cooling units, compute clusters, networking hardware - generates continuous telemetry that, if properly captured, structured, and routed, becomes the operational intelligence layer that makes adaptive infrastructure possible.

The architectural pattern that enables this is the Unified Namespace (UNS). Rather than building and maintaining point-to-point integrations between systems, each subsystem publishes data to a centralized, structured namespace. Every downstream consumer - NOC dashboards, predictive maintenance systems, billing engines, capacity planning tools, AI/ML pipelines - subscribes to the data it needs. The namespace acts as the connective tissue of the intelligent data center.
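A small sketch may help show how one published topic serves many consumers. The topic layout used here (`dc/<site>/<hall>/<row>/<rack>/<subsystem>/<metric>`) is a hypothetical hierarchy chosen for illustration, not one prescribed by the article. The matching function follows the standard MQTT wildcard rules (`+` for one level, `#` for all remaining levels) and ignores the special handling of `$`-prefixed topics for brevity.

```python
def uns_topic(site: str, hall: str, row: str, rack: str, subsystem: str, metric: str) -> str:
    """Build a topic in the hypothetical hierarchy dc/site/hall/row/rack/subsystem/metric."""
    return "/".join(["dc", site, hall, row, rack, subsystem, metric])


def topic_matches(topic_filter: str, topic: str) -> bool:
    """Check a topic against an MQTT-style filter using '+' and '#' wildcards."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for index, level in enumerate(filter_levels):
        if level == "#":
            # '#' is only valid as the last level and matches everything below it.
            return index == len(filter_levels) - 1
        if index >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[index]:
            return False
    return len(filter_levels) == len(topic_levels)


topic = uns_topic("fra1", "hall2", "row07", "rack12", "cooling", "flow_lpm")

# Each consumer subscribes only to the slice of the namespace it needs.
subscriptions = {
    "noc_dashboard": "dc/fra1/#",
    "predictive_maintenance": "dc/+/+/+/+/cooling/#",
    "billing_engine": "dc/+/+/+/+/power/energy_kwh",
}
interested = [name for name, flt in subscriptions.items() if topic_matches(flt, topic)]
print(topic, interested)
```

The publisher never needs to know who is listening; adding a new consumer is a new subscription, not a new integration.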

In an Event-Driven Architecture (EDA), data flows at the speed of change rather than on a polling schedule. A thermal anomaly detected by a coolant flow sensor propagates to every subscribed system in milliseconds. A power spike from a GPU training cluster reaches the capacity planning system before the next scheduled polling interval would have captured it. Adaptive cooling systems respond to real-time heat maps rather than fixed setpoints. The infrastructure moves from reactive to predictive.
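The difference from polling can be sketched with a tiny in-process event bus: the moment an event is published, every registered handler runs, with no waiting for a schedule. This is a simplified stand-in for a broker, not HiveMQ's API; the class, topic, and handler names are hypothetical.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[str, dict], None]


class EventBus:
    """Minimal synchronous publish-subscribe bus with exact-topic matching."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver the event to every subscriber and return how many were notified."""
        handlers = self._handlers.get(topic, [])
        for handler in handlers:
            handler(topic, event)
        return len(handlers)


bus = EventBus()
cooling_log: list[dict] = []
capacity_log: list[dict] = []

# Two independent consumers react to the same event without knowing about each other.
bus.subscribe("rack12/cooling/flow_anomaly", lambda topic, event: cooling_log.append(event))
bus.subscribe("rack12/cooling/flow_anomaly", lambda topic, event: capacity_log.append(event))

notified = bus.publish("rack12/cooling/flow_anomaly", {"flow_lpm": 12.0})
print(notified, cooling_log, capacity_log)
```

A real deployment replaces this in-process bus with a networked broker, but the control flow is the same: the change drives the delivery, not a timer.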

Semantic context is the layer that makes telemetry actionable for AI systems. Raw sensor values without context - without the structure that explains what they represent, where they come from and how they relate to other data - are not meaningful to an AI model or analytics pipeline. Organizing telemetry through a structured MQTT topic hierarchy, enriched by HiveMQ Pulse with entity relationships and data catalog governance, transforms raw signals into information that AI/ML systems can reason about without per-source transformation work.
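As a rough illustration of what "context" adds, the sketch below turns a bare value and its topic into a record that names the site, rack, unit, and the cooling asset serving that rack. The level names, unit table, and rack-to-CDU mapping are all hypothetical and hand-written here; in the architecture described above, that knowledge would come from the intelligence layer rather than from a dictionary in code.

```python
# Hypothetical topic levels and lookup tables, for illustration only.
LEVELS = ("enterprise", "site", "hall", "row", "rack", "subsystem", "metric")
UNITS = {"flow_lpm": "L/min", "supply_temp_c": "degC", "power_kw": "kW"}
RACK_SERVED_BY = {"rack12": "cdu-03"}  # which coolant distribution unit serves which rack


def contextualize(topic: str, value: float) -> dict:
    """Attach location, unit, and asset relationship to a raw sensor value."""
    parts = topic.split("/")
    if len(parts) != len(LEVELS):
        raise ValueError(f"expected {len(LEVELS)} topic levels, got {len(parts)}")
    record: dict = dict(zip(LEVELS, parts))
    record["value"] = value
    record["unit"] = UNITS.get(record["metric"], "unknown")
    record["served_by"] = RACK_SERVED_BY.get(record["rack"])
    return record


record = contextualize("dc/fra1/hall2/row07/rack12/cooling/flow_lpm", 41.5)
print(record)
```

A model consuming `record` no longer has to guess what `41.5` means or which asset it belongs to.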

How HiveMQ's Three-Layer Platform Powers AI-Native Data Center Operations at Enterprise Scale

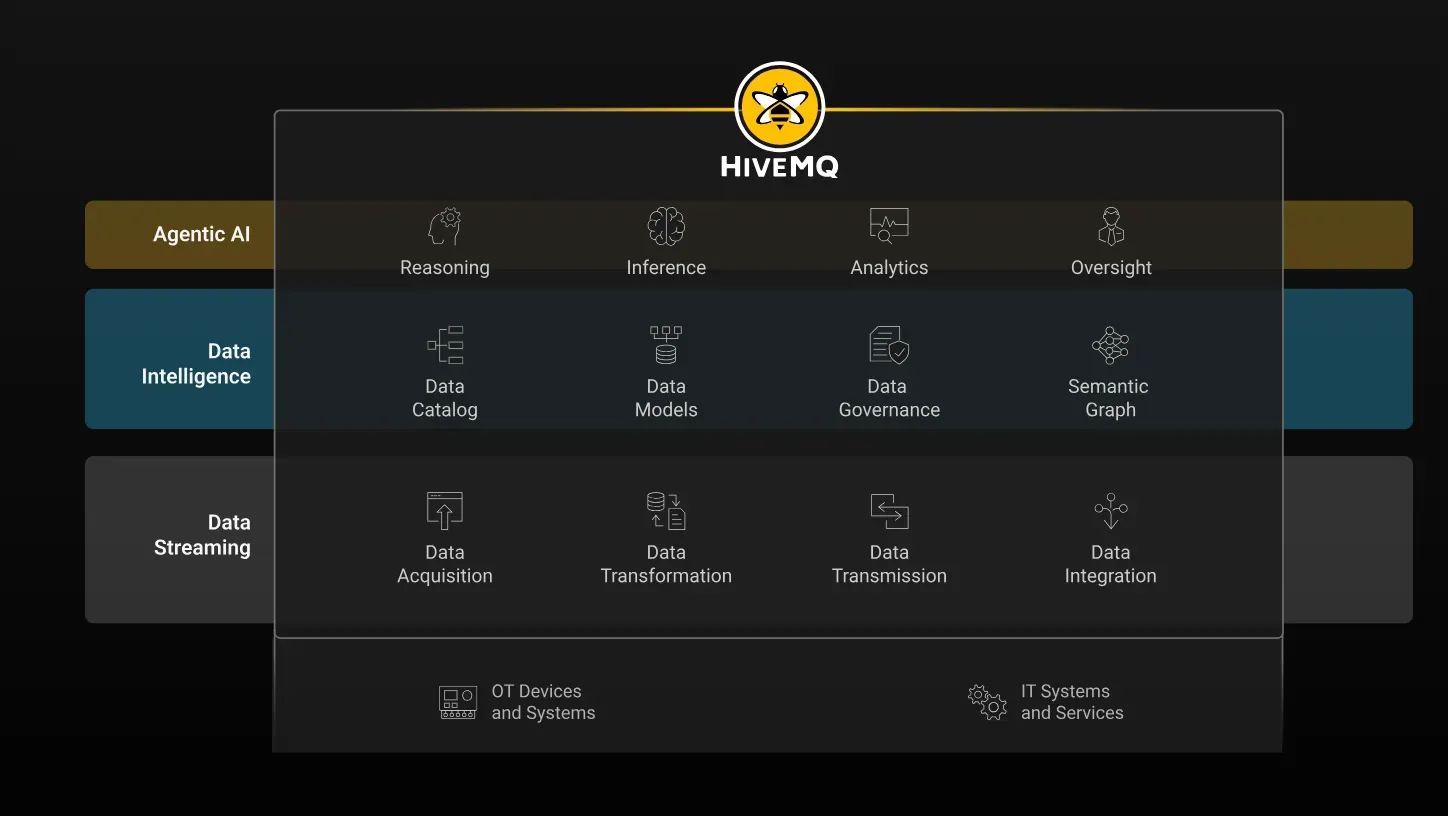

The HiveMQ Platform follows the natural progression data centers take toward AI-native operations: connect and stream all operational data reliably (Data Streaming), make it understandable and trustworthy (Data Intelligence), then enable safe, governed action (Agentic AI for Operations).

HiveMQ provides the enterprise-grade data streaming backbone that operationalizes the UNS/EDA architecture from edge to cloud at the scale and reliability that AI data center operations require. The platform follows three layers that reflect the natural progression a data center takes on its journey to AI-native operations.

The first layer is Data Streaming. HiveMQ Broker provides the MQTT-based publish-subscribe infrastructure that connects every subsystem to the unified namespace. The broker's distributed clustering architecture handles millions of simultaneous connections and processes millions of messages per second, providing the throughput required for high-density AI facility telemetry. High availability configuration ensures operational continuity through maintenance events and hardware failures. HiveMQ Edge translates legacy OT protocols - OPC UA, Modbus, BACnet - into MQTT at the point of collection, enabling older infrastructure to participate in the unified data fabric without hardware replacement. The MQTT topic hierarchy is structured and organized into a UNS from the moment data enters the platform.
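Conceptually, protocol translation at the edge is a mapping from device-native addresses to topics and self-describing payloads. The sketch below shows that idea for Modbus-style holding registers. It is not HiveMQ Edge's configuration format or API; the register addresses, scale factors, and topics are hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisterMapping:
    address: int   # device register address
    topic: str     # destination topic in the namespace
    scale: float   # multiplier from raw integer to engineering units
    unit: str


# Hypothetical register map for a single PDU.
MAPPINGS = [
    RegisterMapping(40001, "dc/fra1/hall2/row07/rack12/power/power_kw", 0.1, "kW"),
    RegisterMapping(40002, "dc/fra1/hall2/row07/rack12/power/voltage_v", 0.1, "V"),
]


def to_mqtt_messages(registers: dict[int, int], timestamp_ms: int) -> list[tuple[str, str]]:
    """Convert raw register values into (topic, JSON payload) pairs."""
    messages = []
    for mapping in MAPPINGS:
        raw = registers.get(mapping.address)
        if raw is None:
            continue  # register not present in this read; skip rather than invent a value
        payload = json.dumps(
            {"value": round(raw * mapping.scale, 3), "unit": mapping.unit, "ts": timestamp_ms}
        )
        messages.append((mapping.topic, payload))
    return messages


messages = to_mqtt_messages({40001: 853, 40002: 4795}, timestamp_ms=1_700_000_000_000)
for topic, payload in messages:
    print(topic, payload)
```

Because scaling and naming happen once at the point of collection, downstream consumers never need to know the device spoke Modbus.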

The second layer is Data Intelligence, delivered through HiveMQ Pulse. Where the streaming layer moves data reliably, the intelligence layer makes it understandable and trustworthy. HiveMQ Pulse adds a semantic graph: discovery capabilities that expose what data exists, a data catalog that governs how it is organized and classified, and contextualization that establishes relationships between entities. For AI data center operations, cooling telemetry is not just a stream of numbers - it becomes a structured representation of how specific cooling assets relate to specific racks, workloads and power domains. AI/ML pipelines receive data with the context required to produce reliable outputs. HiveMQ Data Hub enforces schema and access governance in-stream at ingestion, ensuring that only trusted, validated data flows downstream. This reduces downstream transformation effort and simplifies troubleshooting across systems.
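The idea of validating data at ingestion can be sketched as a gate that every payload must pass before it is forwarded. The example below is plain Python, not the Data Hub policy language or API, and the required fields (`value`, `unit`, `ts`) are a hypothetical schema chosen for illustration.

```python
import json

# Hypothetical schema: field name -> accepted Python types after JSON decoding.
REQUIRED_FIELDS = {"value": (int, float), "unit": (str,), "ts": (int,)}


def validate_payload(raw: bytes) -> tuple[bool, str]:
    """Return (is_valid, reason) for a raw message payload."""
    try:
        doc = json.loads(raw)
    except ValueError:
        # Covers both malformed JSON and undecodable bytes.
        return False, "payload is not valid JSON"
    if not isinstance(doc, dict):
        return False, "payload must be a JSON object"
    for field, types in REQUIRED_FIELDS.items():
        if field not in doc:
            return False, f"missing field: {field}"
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(doc[field], bool) or not isinstance(doc[field], types):
            return False, f"wrong type for field: {field}"
    return True, "ok"


print(validate_payload(b'{"value": 41.5, "unit": "degC", "ts": 1700000000000}'))
print(validate_payload(b'{"value": 41.5, "ts": 1700000000000}'))
```

Rejecting a malformed message at the gate, with a reason attached, is what keeps a single misconfigured sensor from silently corrupting every downstream consumer.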

The third layer is Agentic AI for Operations. As data centers evolve toward AI-native operations, the ability to take safe, governed action on operational data becomes as important as the ability to monitor it. HiveMQ's agentic capabilities are built for environments where mistakes have operational and safety consequences: industrial-grade agent orchestration, governed connectivity and trusted execution. The domain expert describes the goal - reduce cooling energy consumption in this zone, investigate this anomaly, prepare the shift handover report - and the agent acts on operational data with appropriate guardrails. AI is not the end goal here; trusted delegation is.
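One way to picture "appropriate guardrails" is a policy check that sits between an agent's proposed action and the equipment. The sketch below reviews a proposed cooling setpoint change against hard bounds and a maximum step size. The limits, type names, and three-way outcome are hypothetical and do not describe HiveMQ's agent orchestration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SetpointChange:
    zone: str
    current_c: float
    proposed_c: float


# Hypothetical policy limits for supply temperature setpoints.
MIN_SUPPLY_C = 18.0
MAX_SUPPLY_C = 27.0
MAX_STEP_C = 1.0


def review(change: SetpointChange) -> str:
    """Classify a proposed change as 'allow', 'needs_approval', or 'reject'."""
    if not (MIN_SUPPLY_C <= change.proposed_c <= MAX_SUPPLY_C):
        return "reject"  # outside hard safety bounds, never executed
    if abs(change.proposed_c - change.current_c) > MAX_STEP_C:
        return "needs_approval"  # within bounds but too large a jump for autonomous action
    return "allow"


print(review(SetpointChange("zone-a", 22.0, 22.5)))
print(review(SetpointChange("zone-a", 22.0, 25.0)))
print(review(SetpointChange("zone-a", 22.0, 30.0)))
```

The middle outcome matters most: delegation stays trustworthy when the agent can act alone on small changes and must escalate larger ones to a human.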

Organizations that treat infrastructure as a data problem will be the ones best positioned to scale AI, unlock efficiency, and future-proof their operations.

For data centers pursuing AI-native operations, HiveMQ connects with the analytics and AI/ML ecosystem through enterprise integrations with Apache Kafka, Snowflake, Databricks and cloud platforms including AWS, Azure, and Google Cloud. The structured, context-rich telemetry streaming through HiveMQ flows directly into analytics and AI pipelines that produce predictive insights - without requiring per-source ETL work or data replication. These integrations remain decoupled from ingestion, allowing systems to be connected incrementally without impacting the core data flow.

Data Center Infrastructure Is a Data Problem: The Path to AI Leadership

AI-readiness is not a procurement decision. It cannot be achieved by upgrading chips, increasing rack density or signing a nuclear power agreement alone, as important as each of those steps may be. AI-readiness is a data architecture decision: the decision to treat every subsystem as a real-time data source, to organize that data through a Unified Namespace, to make it semantically understandable through an intelligence layer, and to enable safe, governed action through an agentic layer. In practice, this translates to faster site onboarding, consistent visibility across systems and faster resolution of operational issues.

The data centers that will lead in the AI era are those that make this transition now, before the operational complexity of AI workloads overwhelms the capacity of static, siloed monitoring approaches. The operators who treat infrastructure as a data problem will see measurable improvements in cost, efficiency and uptime that compound over time - because every deployment, every new data source and every new analytics capability adds to the operational intelligence of the platform rather than to its integration debt.

HiveMQ is purpose-built for this challenge. From edge protocol translation to enterprise MQTT streaming to semantic intelligence and agentic operations, the platform provides the data backbone that transforms a data center from a compute container into an intelligent, adaptive infrastructure - one that does not just run AI workloads, but understands them, adapts to them and optimizes around them in real time.

To learn more, contact us if you are working to make your data center AI-ready.

HiveMQ Team
