Data Center Monitoring and Optimization with Agentic AI

The digital twin provides the foundation for understanding the operating environment. It reveals what is happening across the facility, and it helps anticipate what may happen next. Agentic AI extends that capability into a more consequential domain: deciding what action should be taken, and executing that action autonomously where appropriate.

In the third post of the ‘Optimizing Data Center Operations with Digital Twins and Agentic AI’ series, we explore why, in AI-intensive data centers, this shift is becoming increasingly important.

Power systems, cooling infrastructure, workload placement and facility operations no longer function as separate domains that can be managed independently. They operate as a single, tightly coupled system in which changes in one area can rapidly affect performance, capacity, efficiency, and risk in another. The number of variables involved, and the speed at which conditions can change, make continuous optimization difficult to sustain through human oversight alone.

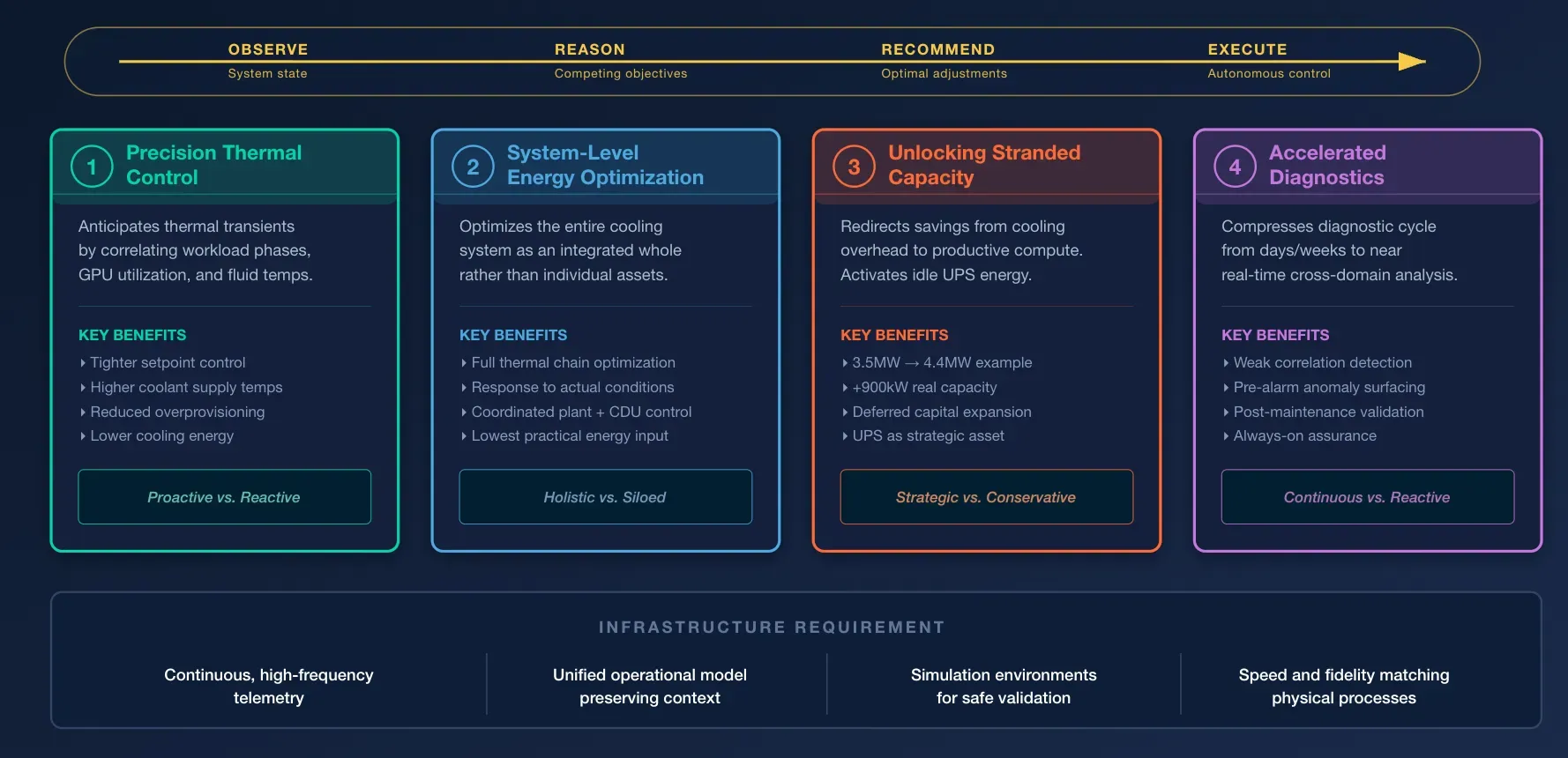

Agentic AI addresses this challenge by introducing autonomous, goal-directed software agents that operate on top of the digital twin and within defined operational guardrails. These agents do more than monitor conditions or generate alerts. They evaluate system state, reason across competing objectives, and recommend or execute adjustments in real time. In effect, they extend the capacity of the operations team by providing continuous, machine-speed decision support and, where authorized, autonomous control across the facility.
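An agent can either recommend an action or execute it. One way to picture that split is a guardrail check that every proposed action must pass. The following is a minimal sketch, not a description of any particular product. `Guardrail`, `review_action`, and the numeric limits are hypothetical. A production system would also log each decision and send recommendations to an approval workflow.

```python
from dataclasses import dataclass


@dataclass
class Guardrail:
    """Hypothetical operating envelope for one control variable (e.g. a CDU supply temperature in °C)."""
    min_value: float   # absolute lower bound the agent may never go below
    max_value: float   # absolute upper bound the agent may never exceed
    max_step: float    # largest change allowed in a single action
    auto_limit: float  # changes larger than this are only recommended, not executed


def review_action(current: float, proposed: float, g: Guardrail) -> tuple[str, float]:
    """Bound a proposed setpoint and decide whether it may be executed autonomously."""
    # 1. Clamp the proposal to the absolute envelope.
    target = min(max(proposed, g.min_value), g.max_value)
    # 2. Rate-limit the change relative to the current setpoint.
    step = target - current
    if abs(step) > g.max_step:
        target = current + (g.max_step if step > 0 else -g.max_step)
    # 3. Small, bounded changes execute automatically; larger ones go to an operator.
    mode = "execute" if abs(target - current) <= g.auto_limit else "recommend"
    return mode, target


if __name__ == "__main__":
    supply_temp = Guardrail(min_value=25.0, max_value=40.0, max_step=2.0, auto_limit=1.0)
    print(review_action(30.0, 30.5, supply_temp))  # ('execute', 30.5)
    print(review_action(30.0, 45.0, supply_temp))  # ('recommend', 32.0)
```

The design keeps authority explicit. The envelope and rate limit apply to every action. The `auto_limit` threshold decides how much autonomy the operations team delegates to the agent.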

Precision Thermal Control

As discussed in the first blog in this series, the shift to liquid cooling introduces a class of control challenges that conventional automation is not well suited to manage. In a high-density GPU environment, thermal conditions can change rapidly and in highly coordinated ways. Hundreds of GPUs may move through computation, communication, and idle phases in near synchrony. As those workload phases shift, IT load can change abruptly, creating sharp thermal transients in the coolant distribution units (CDUs) serving those racks.

Conventional control strategies typically manage this uncertainty by operating conservatively. Coolant supply temperatures are held lower than steady-state conditions would require in order to protect against transient peaks and avoid GPU throttling. While effective as a safety measure, this approach comes at a cost. Cooling energy consumption increases, thermal efficiency declines, and usable IT capacity is constrained because excess margin is maintained at all times rather than applied selectively when conditions demand it.

Agentic AI enables a more precise approach. Operating on top of the digital twin, an AI agent can learn the patterns that precede thermal excursions by correlating workload scheduling signals, GPU utilization trends, fluid temperatures, and upstream system behavior. Rather than waiting for a temperature threshold to be crossed, the agent can anticipate the transient and adjust CDU setpoints, flow behavior, or related cooling parameters in advance. This allows the system to absorb rapid load changes with far greater stability and precision.
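Reacting to a threshold and anticipating a transient correspond roughly to feedback and feedforward control. The sketch below is a deliberately simplified illustration. It assumes the agent receives a short-horizon IT load forecast derived from scheduling signals. A feedforward term lowers the CDU supply temperature before a forecast load rise. A feedback term trims whatever error remains in the measured return temperature. The function name, gains, and limits are hypothetical. In the approach described above, an agent would learn these relationships instead of using fixed gains.

```python
def anticipatory_supply_setpoint(
    base_supply_c: float,
    current_load_kw: float,
    forecast_load_kw: float,
    return_temp_c: float,
    target_return_c: float,
    ff_gain_c_per_kw: float = 0.01,   # hypothetical: °C of supply change per kW of forecast load change
    fb_gain: float = 0.5,             # hypothetical proportional gain on return-temperature error
    limits_c: tuple[float, float] = (25.0, 40.0),  # hypothetical hardware-safe envelope
) -> float:
    """Return a CDU supply-temperature setpoint (°C) that anticipates a forecast load change."""
    # Feedforward: act on the forecast before any temperature has moved.
    # A forecast load increase lowers the supply temperature; a decrease raises it.
    feedforward = -ff_gain_c_per_kw * (forecast_load_kw - current_load_kw)
    # Feedback: correct residual error in the measured return temperature.
    feedback = -fb_gain * (return_temp_c - target_return_c)
    low, high = limits_c
    # Never leave the validated envelope.
    return min(max(base_supply_c + feedforward + feedback, low), high)
```

Suppose a synchronized GPU phase is forecast to add 200 kW to a CDU currently carrying 800 kW. With a 32 °C base setpoint and the return temperature on target, the sketch lowers the setpoint to 30 °C before the load arrives. The feedforward term acts on the forecast, not on an excursion that has already happened. Because of this, the steady-state supply temperature can sit closer to its upper limit.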

The operational benefit is twofold. Thermal control can be maintained much closer to the desired setpoint, reducing the magnitude of transient spikes and lowering the need for broad cooling overprovisioning. At the same time, the facility can operate at higher coolant supply temperatures while remaining within safe hardware limits. The result is a more efficient use of cooling infrastructure, lower energy consumption, and greater availability of power for productive compute capacity rather than thermal overhead.

System-Level Energy Optimization

Cooling infrastructure typically represents the largest share of non-IT energy consumption in the data center. Conventional control strategies are designed first and foremost for stability and reliability, which often leads to inherently conservative operation: fixed chiller staging thresholds, broad safety margins on pressure differentials, and static temperature schedules maintained across changing conditions. These approaches are effective at avoiding incidents, but they also leave significant efficiency gains unrealized under normal operating states.

Agentic AI makes it possible to optimize the cooling system as an integrated whole rather than as a collection of independently controlled assets. Operating across building management, plant controls, and supervisory systems, an AI agent can continuously evaluate the state of the full thermal chain, from central plant equipment through distribution and rack-level cooling, and determine the operating point that satisfies reliability constraints with the lowest practical energy input. Instead of relying on fixed assumptions, the agent responds to actual conditions in real time, adjusting control variables as load profiles, ambient conditions, and equipment performance change.
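"The operating point that satisfies reliability constraints with the lowest practical energy input" can be shown on a toy plant with two control variables. These are the chilled-water supply temperature and the pump speed. The sketch below is illustrative only. The linear chiller COP curve, the design flow, the pump rating, and the 30 °C return-temperature limit are hypothetical. A real plant model would include condensers, cooling towers, and ambient conditions. The exhaustive grid search is a simple stand-in for whatever optimizer an agent actually uses.

```python
import itertools

CP_WATER_KJ_PER_KG_K = 4.186  # specific heat of water


def return_temp_c(load_kw: float, supply_c: float, pump_speed: float,
                  design_flow_kg_s: float = 50.0) -> float:
    """Loop return temperature from an energy balance: Q = m_dot * cp * delta_T."""
    flow_kg_s = design_flow_kg_s * pump_speed
    return supply_c + load_kw / (flow_kg_s * CP_WATER_KJ_PER_KG_K)


def plant_power_kw(load_kw: float, supply_c: float, pump_speed: float,
                   design_pump_kw: float = 30.0,
                   cop_at_10c: float = 5.0, cop_gain_per_c: float = 0.15) -> float:
    """Hypothetical plant model: linear chiller COP curve plus pump affinity (cube) law."""
    cop = cop_at_10c + cop_gain_per_c * (supply_c - 10.0)
    return load_kw / cop + design_pump_kw * pump_speed ** 3


def best_operating_point(load_kw: float, max_return_c: float = 30.0):
    """Grid-search the lowest-power (supply temperature, pump speed) pair that meets the return limit."""
    supplies_c = [s / 2 for s in range(20, 41)]   # 10.0 to 20.0 °C in 0.5 °C steps
    speeds = [p / 20 for p in range(6, 21)]       # 30 % to 100 % pump speed in 5 % steps
    feasible = [
        (plant_power_kw(load_kw, s, p), s, p)
        for s, p in itertools.product(supplies_c, speeds)
        if return_temp_c(load_kw, s, p) <= max_return_c  # reliability constraint
    ]
    if not feasible:
        raise ValueError("no operating point satisfies the return-temperature limit")
    return min(feasible)  # (power_kw, supply_c, pump_speed)
```

Take these made-up parameters and a 2,000 kW load. The search settles at an intermediate point, 19 °C supply with the pump at 90 %, not at either extreme. A warmer supply improves chiller COP. It also shrinks the allowable temperature rise, so the loop needs more flow, and pump power grows with the cube of speed. A static schedule tuned for one condition would miss this balance as load and equipment performance change.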

When this capability is combined with the precision CDU control described above, the benefits extend across the full cooling stack. Localized thermal stability at the rack level reduces the need for broad upstream conservatism, while plant-level optimization ensures that chillers, pumps, and supporting systems operate more efficiently in response to actual demand. The result is not simply lower cooling energy consumption in isolation, but a coordinated improvement in total thermal system performance, delivering measurable efficiency gains while maintaining the reliability and service levels required by the facility.

How to Unlock Stranded IT Capacity

The gains created through precision thermal control do more than improve efficiency; they expand the amount of IT capacity that can be used safely within the existing facility envelope. Every watt not consumed by avoidable cooling overhead is a watt that can be redirected to productive compute. In the 5-megawatt data hall, where thermal uncertainty may previously have limited deployments to 3.5 megawatts, the combination of digital twin visibility and agentic AI control can increase confidence in the facility’s true operating boundary and allow that ceiling to move materially higher. If the hall can safely support 4.4 megawatts under current conditions, that additional 900 kilowatts of capacity is not an abstract technical gain. It translates into more available compute capacity, higher throughput, and deferred capital expenditure.
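The arithmetic can be written out using only the figures above. Here P is the IT load the hall can safely support and U is its utilization of the 5 MW rating:

```latex
\begin{aligned}
\Delta P &= P_{\text{validated}} - P_{\text{previous}} = 4.4\ \text{MW} - 3.5\ \text{MW} = 0.9\ \text{MW} = 900\ \text{kW} \\
U_{\text{before}} &= \frac{3.5\ \text{MW}}{5\ \text{MW}} = 70\%, \qquad U_{\text{after}} = \frac{4.4\ \text{MW}}{5\ \text{MW}} = 88\% \\
\frac{\Delta P}{P_{\text{previous}}} &= \frac{0.9}{3.5} \approx 25.7\%
\end{aligned}
```

In other words, the same facility envelope would host roughly a quarter more deployable IT load than before.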

This is where optimization becomes strategically significant. The value lies not only in consuming less energy, but in extracting more productive output from infrastructure that has already been built, contracted, and paid for. Capacity that would otherwise remain trapped behind conservative margins can be brought into service with a higher degree of confidence because the operational limits are continuously understood rather than statically assumed.

A similar principle applies to energy stored within backup power systems. UPS battery capacity has traditionally been reserved almost exclusively for resilience, remaining idle except during outages or routine testing. With the appropriate controls, policies and operational safeguards in place, agentic AI can identify limited windows in which stored energy may be used strategically to reduce peak grid demand or smooth short-term load fluctuations without compromising resilience requirements. In that model, backup power is no longer treated solely as a passive insurance asset. It becomes part of a broader optimization strategy that improves how the facility uses the electrical capacity already available to it.
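The key safeguard in this model is that stored energy below a resilience reserve is never used. The sketch below is a minimal, hypothetical illustration of that rule for a single control interval. `ups_discharge_kw` and all of its parameters are assumptions. In practice, the reserve would be derived from the facility's required ride-through time. Battery health, cycle limits, and operator approval policies would be layered on top.

```python
def ups_discharge_kw(grid_demand_kw: float, peak_target_kw: float,
                     soc_kwh: float, reserve_kwh: float,
                     max_discharge_kw: float, interval_h: float) -> float:
    """Power the UPS may supply this interval to shave demand above peak_target_kw.

    Only energy above reserve_kwh (the resilience reserve) is ever considered spendable.
    """
    excess_kw = grid_demand_kw - peak_target_kw
    if excess_kw <= 0:
        return 0.0  # demand is already below the peak target; keep the battery idle
    # Energy above the reserve, expressed as the average power it could sustain for the interval.
    spendable_kw = max(soc_kwh - reserve_kwh, 0.0) / interval_h
    return float(min(excess_kw, max_discharge_kw, spendable_kw))


if __name__ == "__main__":
    # A 15-minute interval in which demand briefly exceeds a hypothetical 4,000 kW peak target.
    print(ups_discharge_kw(4_200, 4_000, soc_kwh=500, reserve_kwh=450,
                           max_discharge_kw=300, interval_h=0.25))
```

Three limits apply: the excess demand, the UPS discharge rating, and the energy above the reserve. Discharge is capped by whichever is smallest. When the battery is at or below its reserve, the function returns zero regardless of grid demand.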

How Does Agentic AI Accelerate Diagnostics?

In tightly coupled facilities, diagnosing operational anomalies can be slow and difficult. The root cause of an intermittent thermal excursion or efficiency loss may span multiple subsystems, unfold over time, and present only weak signals in any one domain. Human teams often have deep expertise within individual systems, but rarely have the continuous, cross-domain visibility needed to identify these patterns quickly. As a result, diagnosis can take days or even weeks, during which performance degrades, risk accumulates, and corrective action is delayed.

Agentic AI changes this by compressing the diagnostic cycle from extended investigation to near-real-time analysis. Operating continuously across the full telemetry environment, diagnostic agents can detect weak correlations, gradual deviations, and short-lived events that would be easy to miss through manual review alone. Because they reason across the broader operational context, they are better positioned to distinguish between isolated anomalies and symptoms of a deeper system-level issue.
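One simple way to surface a weak cross-domain relationship is to test whether a signal in one subsystem consistently leads a signal in another. The sketch below computes the Pearson correlation between two series at a range of time lags and reports the strongest. The signal names and data are hypothetical. A strong correlation at some lag is a lead for investigation, not proof of causation.

```python
from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length series (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy) if sxx and syy else 0.0


def strongest_lag(leader: list[float], follower: list[float], max_lag: int) -> tuple[int, float]:
    """Find the lag k at which follower[t] correlates most strongly with leader[t - k]."""
    best = (0, 0.0)
    for k in range(max_lag + 1):
        # Align leader[0 .. n-k-1] with follower[k .. n-1].
        r = pearson(leader[: len(leader) - k], follower[k:])
        if abs(r) > abs(best[1]):
            best = (k, r)
    return best


if __name__ == "__main__":
    # Hypothetical: dips in plant pump speed (percentage points) and a rack
    # inlet temperature (°C) that rises three samples after each dip.
    pump_speed_dip = [0, 0, 1, 0, 0, 2, 0, 0, 1, 0, 0, 3, 0, 0, 0, 1, 0, 0]
    rack_inlet_c = [20.0] * 3 + [20.0 + 0.5 * d for d in pump_speed_dip[:-3]]
    print(strongest_lag(pump_speed_dip, rack_inlet_c, max_lag=5))
```

A human reviewing either signal alone might see only small, intermittent bumps. The lagged comparison exposes the consistent three-sample delay that links the two subsystems.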

Consider the chiller maintenance scenario introduced in the second blog in this series, ‘How The Digital Twin Becomes the Foundation for Intelligent Data Center Operations’. Once the chiller returns to service, a diagnostic agent can immediately compare its live operating behavior against historical baseline performance and expected post-maintenance conditions. If the asset exhibits subtle deviations, such as slightly elevated vibration, reduced heat transfer efficiency, abnormal pressure behavior, or slower thermal response, those changes can be identified early, even if they are not yet severe enough to trigger conventional alarms. What might otherwise go unnoticed for weeks can be surfaced within minutes, allowing operators to intervene before the issue compounds into reduced cooling performance, higher energy consumption, or a future failure.
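The post-maintenance comparison can be sketched as a statistical test of each live metric against its historical baseline. This minimal illustration makes several assumptions. Per-metric baseline samples are recorded at comparable load and ambient conditions. The metric names are hypothetical. The test is a simple z-test on the live mean. A real diagnostic agent would normalize for operating conditions and combine many such signals.

```python
from statistics import mean, stdev


def post_maintenance_deviations(baseline: dict[str, list[float]],
                                live: dict[str, list[float]],
                                z_threshold: float = 3.0) -> dict[str, float]:
    """Flag metrics whose live mean has drifted from the historical baseline.

    Returns {metric: z_score} for each metric whose |z| meets the threshold. The
    threshold can sit well inside conventional alarm limits, so drift is
    surfaced early.
    """
    flagged = {}
    for metric, history in baseline.items():
        samples = live.get(metric)
        if not samples or len(history) < 2:
            continue  # not enough data to compare
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            continue  # constant baseline; a z-score is undefined
        # z-test of the live mean: the standard error shrinks as more live samples arrive.
        z = (mean(samples) - mu) / (sigma / len(samples) ** 0.5)
        if abs(z) >= z_threshold:
            flagged[metric] = round(z, 2)
    return flagged


if __name__ == "__main__":
    baseline = {
        "vibration_mm_s": [2.0, 2.1, 1.9, 2.0, 2.1, 1.9, 2.0, 2.0],
        "approach_temp_c": [3.0, 3.2, 2.8, 3.1, 2.9, 3.0],
    }
    after_service = {
        "vibration_mm_s": [2.3, 2.2, 2.3, 2.4],
        "approach_temp_c": [3.05, 2.95, 3.0, 3.02],
    }
    print(post_maintenance_deviations(baseline, after_service))
```

In this example, vibration rises by only about 0.3 mm/s, which would likely stay below a fixed alarm limit. It is still flagged, because it is many standard errors away from the chiller's own history. The approach temperature is not flagged.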

The result is not simply faster troubleshooting, but a more continuous and proactive form of operational assurance. Diagnostics become an always-on capability rather than a reactive process initiated only after a visible problem emerges.

The Infrastructure Question

The capabilities described in this post require the right underlying data foundations to be in place. They require continuous, high-frequency telemetry across critical subsystems, a unified operational model that preserves context across domains, and simulation environments in which agents can be trained and validated safely before being introduced into live operations. Just as importantly, all of this must function at a speed and level of fidelity that matches the physical processes being monitored and controlled.

This is where the real constraint emerges. Most existing data center infrastructure was not designed for this operating model. It was built for an era in which periodic reporting, isolated control systems, and human interpretation of dashboards were considered sufficient. In that environment, telemetry was collected primarily to inform operators, not to support machine-speed reasoning, closed-loop optimization, or autonomous intervention.

As a result, the limiting factor is often not the promise of digital twins or agentic AI, but the readiness of the underlying data architecture. If telemetry is incomplete, delayed, inconsistent, or fragmented across proprietary systems, then the twin cannot maintain an accurate view of the facility, and agents cannot act with the confidence or precision required for production use. The infrastructure gap is therefore not a secondary implementation challenge. It is the central barrier between conceptual potential and operational reality.
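Some of the failure modes named above can be checked mechanically before any agent is allowed to act on the data. The sketch below flags telemetry points that are missing, stale (delayed), or under-reporting (incomplete) over an evaluation window. The data structure, point names, and thresholds are hypothetical. The sketch does not attempt the harder checks for inconsistency across proprietary systems, such as mismatched units, naming, or clock alignment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TelemetryPoint:
    """Hypothetical summary of one telemetry point over an evaluation window."""
    name: str
    expected_interval_s: float     # how often the point is supposed to report
    last_seen_s: Optional[float]   # timestamp of the latest sample, None if never received
    samples_in_window: int         # samples actually received during the window


def readiness_issues(points: list[TelemetryPoint], now_s: float, window_s: float,
                     max_staleness_factor: float = 3.0,
                     min_completeness: float = 0.95) -> dict[str, list[str]]:
    """Return the points that are missing, stale, or incomplete, with the reasons."""
    issues: dict[str, list[str]] = {}
    for p in points:
        problems = []
        if p.last_seen_s is None:
            problems.append("missing")
        else:
            # Delayed: nothing received for several expected reporting intervals.
            if now_s - p.last_seen_s > max_staleness_factor * p.expected_interval_s:
                problems.append("stale")
            # Incomplete: fewer samples than the reporting interval implies.
            expected_samples = window_s / p.expected_interval_s
            if p.samples_in_window / expected_samples < min_completeness:
                problems.append("incomplete")
        if problems:
            issues[p.name] = problems
    return issues


if __name__ == "__main__":
    points = [
        TelemetryPoint("cdu_01/supply_temp_c", 1.0, 999.5, 60),
        TelemetryPoint("cdu_02/flow_lpm", 1.0, 990.0, 50),
        TelemetryPoint("chiller_03/vibration_mm_s", 10.0, None, 0),
    ]
    print(readiness_issues(points, now_s=1000.0, window_s=60.0))
```

A gate like this lets an agent narrow its own authority when its inputs degrade. For example, it could fall back from executing actions to only recommending them.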

Kudzai Manditereza

Kudzai is a tech influencer and electronic engineer based in Germany. As a Senior Industrial Solutions Advocate at HiveMQ, he helps developers and architects adopt MQTT, Unified Namespace (UNS), IIoT solutions, and HiveMQ for their IIoT projects. Kudzai runs a popular YouTube channel focused on IIoT and Smart Manufacturing technologies, and he has been recognized as one of the Top 100 global influencers talking about Industry 4.0 online.