How the Digital Twin Becomes the Foundation for Intelligent Data Center Operations

Power constraints, thermal limits and AI workload demand are often treated as separate operational issues. In reality, they are deeply interconnected, and that interdependence is exactly what makes them difficult to manage through conventional, system-by-system approaches.

The relationship between power and thermal capacity illustrates the problem clearly. A facility may reach its thermal limit well before it exhausts its contracted electrical capacity. When that happens, a portion of available power becomes effectively stranded. Although the operator cannot use the full allocation because cooling capacity has become the bottleneck, the grid must still reserve the entire contracted load. That capacity remains locked to the site rather than being redeployed elsewhere, even though the facility itself cannot fully utilize it.
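To make the stranded-capacity arithmetic concrete, here is a minimal Python sketch. It assumes that cooling caps the IT load at a fixed value and that total facility draw is roughly IT load multiplied by Power Usage Effectiveness (PUE). The function name and all figures are hypothetical, not measurements from a real site.

```python
def stranded_power_kw(contracted_kw: float, cooling_limit_it_kw: float, pue: float) -> float:
    """Contracted power that cannot be used because cooling caps the IT load.

    Simplifying assumption: facility draw = IT load x PUE.
    """
    # Highest facility draw reachable while IT load sits at the thermal limit.
    max_facility_draw_kw = cooling_limit_it_kw * pue
    # If the site is power-limited rather than cooling-limited, nothing is stranded.
    return max(0.0, contracted_kw - max_facility_draw_kw)


# Hypothetical site: 10 MW contracted, cooling supports 6 MW of IT load, PUE 1.4.
stranded = stranded_power_kw(contracted_kw=10_000, cooling_limit_it_kw=6_000, pue=1.4)
print(f"Stranded capacity: {stranded:.0f} kW")
```

In this illustrative case, roughly 1.6 MW of the contracted allocation stays reserved on the grid side but cannot be put to work inside the facility.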

This is the operational consequence of fragmented visibility: each subsystem may appear manageable in isolation, while the facility as a whole operates below its true potential.

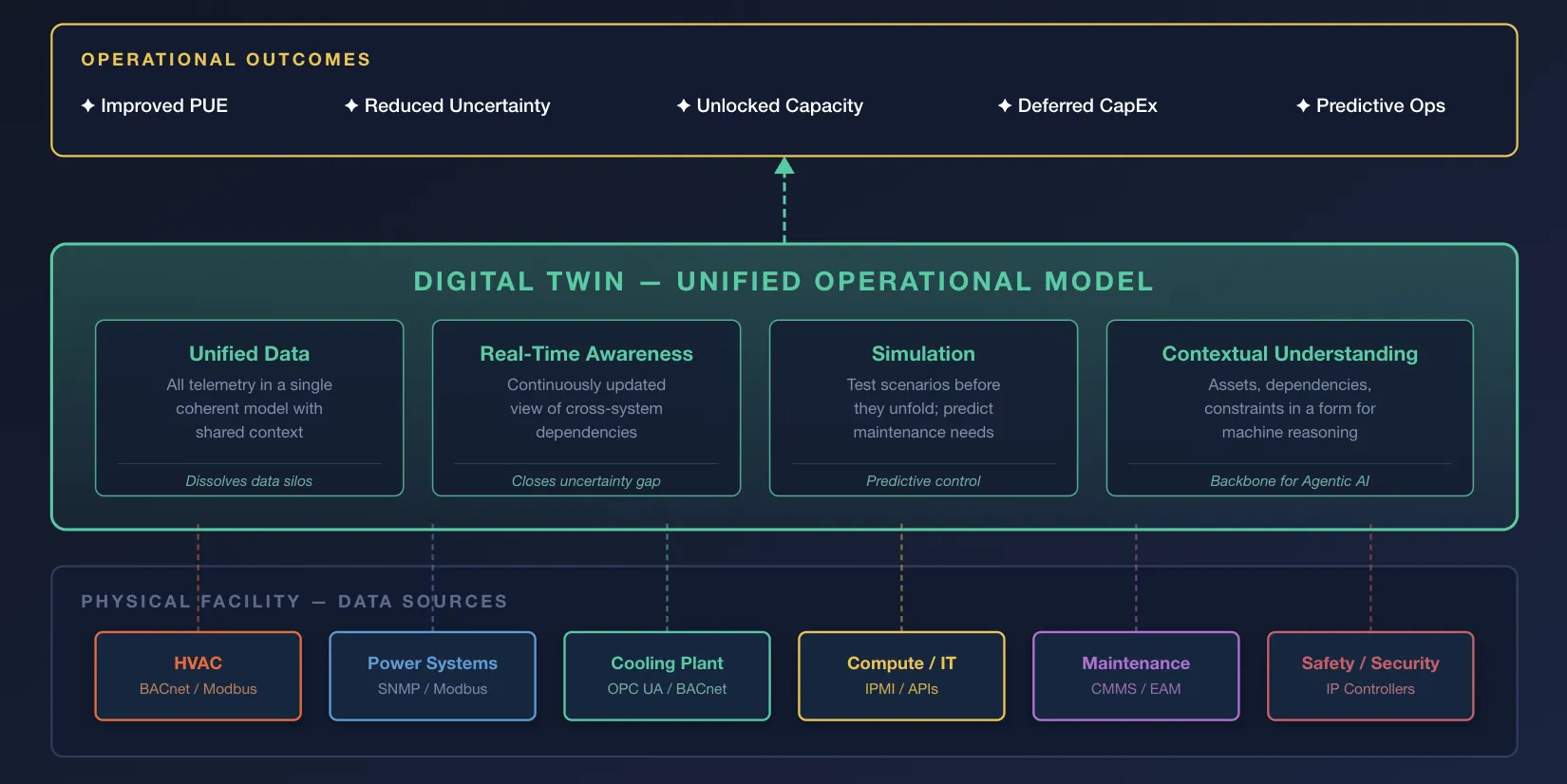

In this second post of our series on the data maturity path to intelligence-driven data center operations, we look at how a digital twin addresses this problem by providing a continuously updated, data-driven virtual model of the physical facility and its critical subsystems. By unifying telemetry, asset states, and system relationships into a single operational model, the digital twin gives operators the context needed to understand how constraints in one domain affect performance in another, and to act on those interdependencies in real time.

How Do Digital Twins Close the Uncertainty Gap?

Every facility has a theoretical operating envelope: contracted power, designed cooling capacity, and rated rack density. In practice, however, operators rarely run close to those limits. The issue is not always that the infrastructure cannot support higher utilization, but that operators lack the confidence to determine exactly where the real operational boundaries lie at any given moment. Physical systems do not behave in perfectly linear ways. Loads are unevenly distributed, thermal conditions shift with ambient weather and workload placement, and equipment performance changes over time. In the absence of precise, real-time insight into these interactions, the rational response is to preserve wide operating margins.

By continuously ingesting telemetry from across the facility and modeling the relationships between power, cooling, workload distribution, and equipment state, a digital twin provides a live operational picture of where true capacity boundaries exist at any given moment. Historical behavior can be combined with current conditions to create a dynamic and far more accurate profile of facility performance. The result is not a reduction in safety, but a reduction in uncertainty. Operating margins can be narrowed with confidence because they are based on measured conditions rather than conservative assumptions.

Why Rethink Efficiency Beyond PUE?

Power Usage Effectiveness (PUE) remains a useful metric, but it captures only one dimension of operational performance. On its own, it cannot represent the full economic, capacity or sustainability implications of how a facility is run. An exclusive focus on improving PUE can therefore produce outcomes that appear efficient in isolation while undermining broader operational objectives.

An operator may implement cooling changes that reduce PUE from, say, 1.5 to 1.35. On paper, this appears to be a clear efficiency gain. But if those changes also constrain workload placement or reduce the hall’s usable capacity, the result may be counterproductive. From both a business and environmental perspective, it can be far more valuable to operate at a slightly higher PUE while fully utilizing existing infrastructure than to achieve a lower ratio in a partially utilized facility that still requires additional capacity to be built or leased elsewhere. In that scenario, the apparent efficiency improvement masks a larger failure in system-level optimization.
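The trade-off can be laid out side by side with a small Python sketch. It compares two hypothetical configurations of the same hall using the PUE figures from the example above. The usable IT capacities, the demand figure and the `HallScenario` class are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class HallScenario:
    name: str
    pue: float            # total facility power / IT power
    usable_it_kw: float   # IT load the hall can actually host in this configuration

    def facility_kw(self) -> float:
        # Total draw when the usable IT capacity is fully occupied.
        return self.pue * self.usable_it_kw

    def overhead_kw(self) -> float:
        # Non-IT power (cooling, distribution losses, and so on).
        return self.facility_kw() - self.usable_it_kw

    def shortfall_kw(self, demand_it_kw: float) -> float:
        # IT demand that must be built or leased elsewhere.
        return max(0.0, demand_it_kw - self.usable_it_kw)


baseline = HallScenario("baseline", pue=1.5, usable_it_kw=8_000)
tuned = HallScenario("tuned cooling", pue=1.35, usable_it_kw=6_500)
demand_it_kw = 8_000  # hypothetical IT demand the business needs to place

for s in (baseline, tuned):
    print(f"{s.name}: overhead {s.overhead_kw():.0f} kW, "
          f"unplaced demand {s.shortfall_kw(demand_it_kw):.0f} kW")
```

With these made-up numbers the tuned configuration shows less overhead but leaves 1,500 kW of demand without a home, which is exactly the kind of cost a PUE figure alone does not reveal.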

A digital twin makes these trade-offs visible. By modeling the interactions between cooling performance, power availability, workload distribution, utilization and asset constraints, it enables operators to evaluate efficiency in the context of total system value. Decisions can then be made against a broader set of objectives: not only reducing energy overhead, but also maximizing productive capacity, deferring capital expenditure, and minimizing total carbon impact across the operating estate.

This shifts the question from how to optimize an isolated metric to how to optimize the facility as an integrated system. The goal is no longer the lowest PUE at any cost, but the highest overall operational value within defined reliability, cost, and sustainability boundaries.

How Do Digital Twins Dissolve Data Silos?

The value of a digital twin depends on the range and quality of the data it brings together. In most facilities, however, the systems responsible for critical operations remain fragmented. Building management systems govern HVAC, power monitoring platforms track electrical performance, maintenance systems manage work orders and asset service history, and IT platforms monitor compute and network behavior. Each operates with its own telemetry, data model and interface.

The consequence is not simply inconvenience, but loss of operational context. A signal viewed in isolation rarely carries enough meaning to support confident action. If a chiller is reported as offline by the building management system, that status alone does not explain whether the event reflects an unexpected failure, a controlled shutdown, or a scheduled maintenance activity. Without integration with the maintenance system, the signal remains incomplete, and any response based on it, whether manual or automated, risks being delayed, unnecessary or wrong.

A digital twin resolves this problem by bringing these isolated data sources into a unified operational model. Telemetry is no longer interpreted as a set of disconnected events, but as part of a broader system state enriched with asset condition, maintenance status, dependency relationships and operational impact. In that model, an offline chiller is understood not merely as unavailable, but as unavailable for a defined reason, for a known duration and with measurable implications for cooling capacity in a specific part of the facility.
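As a rough illustration of that enrichment step, the Python sketch below joins an "offline" status from a building management system with maintenance work orders. The data classes, field names, asset IDs and capacity figure are all hypothetical. A real twin would draw these from the facility's actual systems and data model.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class WorkOrder:
    asset_id: str
    start: datetime
    end: datetime
    kind: str  # e.g. "scheduled_maintenance"


@dataclass
class AssetState:
    asset_id: str
    status: str
    reason: str
    expected_back: Optional[datetime]
    cooling_loss_kw: float


def enrich_offline_event(asset_id: str, at: datetime,
                         work_orders: list[WorkOrder],
                         rated_cooling_kw: dict[str, float]) -> AssetState:
    """Attach reason, duration and capacity impact to a bare 'offline' signal."""
    loss = rated_cooling_kw[asset_id]
    for wo in work_orders:
        # An open work order covering this moment explains the outage.
        if wo.asset_id == asset_id and wo.start <= at <= wo.end:
            return AssetState(asset_id, "offline", wo.kind, wo.end, loss)
    # No matching work order: treat as unplanned, with unknown duration.
    return AssetState(asset_id, "offline", "unplanned", None, loss)


orders = [WorkOrder("chiller-2", datetime(2025, 6, 10, 8), datetime(2025, 6, 10, 16),
                    "scheduled_maintenance")]
state = enrich_offline_event("chiller-2", datetime(2025, 6, 10, 9, 30),
                             orders, {"chiller-2": 1_200.0})
print(state.reason, state.expected_back, state.cooling_loss_kw)
```

The same offline signal received outside the work-order window would be tagged as unplanned, which is the distinction a raw status flag cannot make on its own.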

This is the shift from data aggregation to operational intelligence.

The twin does not simply collect signals from multiple systems; it gives those signals shared meaning. That shared meaning is what allows operators to understand conditions faster, assess consequences more accurately, and respond with far greater precision.

Simulation: From Reactive Response to Predictive Control

Real-time visibility is an important step forward, but the most transformative capability of a digital twin is simulation. Because the twin models the relationships between subsystems rather than simply displaying their current status, it allows operators to evaluate scenarios before they unfold and assess interventions before they are made.

For example, before deploying a new high-density AI training cluster into available capacity, the operator can simulate the thermal and electrical effects of that deployment under a range of conditions: elevated summer temperatures, reduced cooling availability during planned maintenance, or a sudden increase in inference demand on adjacent racks. The purpose is not merely to observe current conditions, but to test how the facility is likely to behave under stress. If the proposed deployment would push any subsystem beyond safe operating limits, the risk can be identified in advance and mitigation options can be evaluated before hardware is installed or workloads are assigned.
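A heavily simplified version of such a what-if check can be sketched in Python. This is a static headroom comparison rather than a physics-based simulation. The scenario names, load figures and limits are hypothetical, and it assumes the heat to be rejected equals the electrical IT load.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    cooling_kw: float      # cooling capacity available under this condition
    extra_load_kw: float   # additional load from neighbouring racks


def check_deployment(base_load_kw: float, new_cluster_kw: float,
                     power_limit_kw: float,
                     scenarios: list[Scenario]) -> dict[str, list[str]]:
    """Return the limits each scenario would breach, keyed by scenario name."""
    violations: dict[str, list[str]] = {}
    for s in scenarios:
        total_kw = base_load_kw + new_cluster_kw + s.extra_load_kw
        breached = []
        if total_kw > s.cooling_kw:
            breached.append("cooling")
        if total_kw > power_limit_kw:
            breached.append("power")
        if breached:
            violations[s.name] = breached
    return violations


scenarios = [
    Scenario("nominal", cooling_kw=5_000, extra_load_kw=0),
    Scenario("summer_peak", cooling_kw=4_400, extra_load_kw=0),
    Scenario("chiller_maintenance", cooling_kw=3_800, extra_load_kw=0),
    Scenario("inference_spike", cooling_kw=5_000, extra_load_kw=600),
]
violations = check_deployment(base_load_kw=3_000, new_cluster_kw=1_200,
                              power_limit_kw=4_700, scenarios=scenarios)
print(violations)
```

With these illustrative inputs the deployment fits under nominal and summer conditions, but breaches the cooling limit during chiller maintenance and the power limit during an inference spike.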

This same capability shifts maintenance from schedule-based intervention to condition-based prediction. By projecting current degradation patterns into future operating states, the digital twin can identify when a coolant pump, valve, heat exchanger or UPS module is likely to require attention based on its actual performance in its specific operating environment. Simulated expected behavior can be compared continuously against measured actual performance, allowing anomalies to be detected at an early stage, well before they develop into faults or service interruptions.
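The comparison of expected and measured behavior can be illustrated with a simple residual check in Python. This is only a sketch: it assumes the twin already supplies an expected value per sample, and the tolerance, run length and sample readings are hypothetical.

```python
from typing import Optional, Sequence


def first_sustained_deviation(expected: Sequence[float], measured: Sequence[float],
                              tolerance: float, min_run: int = 3) -> Optional[int]:
    """Index where measured first departs from expected for min_run samples in a row.

    Requiring a run of consecutive deviations filters out one-off sensor noise.
    """
    run = 0
    for i, (e, m) in enumerate(zip(expected, measured)):
        if abs(m - e) > tolerance:
            run += 1
            if run >= min_run:
                return i - min_run + 1  # start of the deviating run
        else:
            run = 0
    return None


# Hypothetical pump outlet temperatures (deg C): model output vs. sensor readings.
expected = [50.0] * 8
measured = [50.2, 49.8, 50.1, 51.5, 51.8, 52.1, 52.6, 53.0]
start = first_sustained_deviation(expected, measured, tolerance=1.0)
print(start)  # 3
```

Here the drift is flagged as starting at the fourth sample, while the readings are still well short of anything that would trip a conventional alarm threshold.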

The result is a fundamental change in operating posture. Instead of reacting to events after they affect the facility, operators can test, anticipate and intervene before risks materialize. That is the transition from reactive response to predictive control.

The Digital Twin as the Backbone for Agentic AI

Everything the digital twin provides (unified data, real-time operational awareness, contextual understanding and simulation) serves two purposes. It improves how facilities are managed today, and it establishes the foundation for a more autonomous operating model in the future.

Agentic AI cannot operate effectively on top of raw sensor streams, fragmented telemetry or disconnected dashboards alone. It requires a structured, continuously updated representation of the environment in which decisions will be made and actions will be taken.

This is what the digital twin provides. It turns the facility into an intelligible system: one in which assets, dependencies, constraints, and operating conditions are represented in a form that can support machine reasoning as well as human understanding. Instead of forcing an AI system to infer operational reality from isolated signals, the twin provides a coherent model of that reality.
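One way to picture such a machine-readable representation is a dependency graph that an agent, or an operator, can query. The Python sketch below assumes a hypothetical cooling topology stored as a plain adjacency mapping. The asset names are invented, and a production twin would use a much richer model.

```python
from collections import deque


def downstream_assets(feeds: dict[str, list[str]], source: str) -> set[str]:
    """All assets that directly or indirectly depend on `source` (breadth-first walk)."""
    seen: set[str] = set()
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in feeds.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


# Hypothetical topology: which asset supplies cooling to which.
feeds = {
    "chiller-2": ["crah-3", "crah-4"],
    "crah-3": ["row-C"],
    "crah-4": ["row-D"],
    "row-C": ["rack-C01", "rack-C02"],
}
affected = downstream_assets(feeds, "chiller-2")
print(sorted(affected))
```

Given an explicit structure like this, the question "what is affected if chiller-2 goes offline?" becomes a lookup rather than an inference from isolated signals.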

Simulation is especially critical in this progression. In a live data center, the cost of allowing an AI system to learn through trial and error is unacceptable. The risks to uptime, equipment, and safety are simply too high. A digital twin creates a safe, high-fidelity environment in which agents can be trained, tested and validated across a wide range of operating scenarios before they are introduced into production. That includes not only common operating conditions, but also rare or extreme events that may never have been observed in the physical facility, yet still need to be anticipated.

The value of this synthetic environment is that it is not limited to replaying the past. It can be used to model conditions the facility has not yet encountered, allowing agents to develop and be evaluated against future states rather than historical events alone. As a result, agents deployed on top of a well-instrumented digital twin do not begin as blind systems reacting to live telemetry. They begin with operational context, validated behaviors, and a safer path to working alongside human teams.

Are you ready to shift from data aggregation to operational intelligence?

Kudzai Manditereza

Kudzai is a tech influencer and electronic engineer based in Germany. As a Senior Industrial Solutions Advocate at HiveMQ, he helps developers and architects adopt MQTT, Unified Namespace (UNS), IIoT solutions, and HiveMQ for their IIoT projects. Kudzai runs a popular YouTube channel focused on IIoT and Smart Manufacturing technologies and he has been recognized as one of the Top 100 global influencers talking about Industry 4.0 online.