Digital Twins and Agentic AI: A Data Maturity Path to Intelligence-Driven Operations

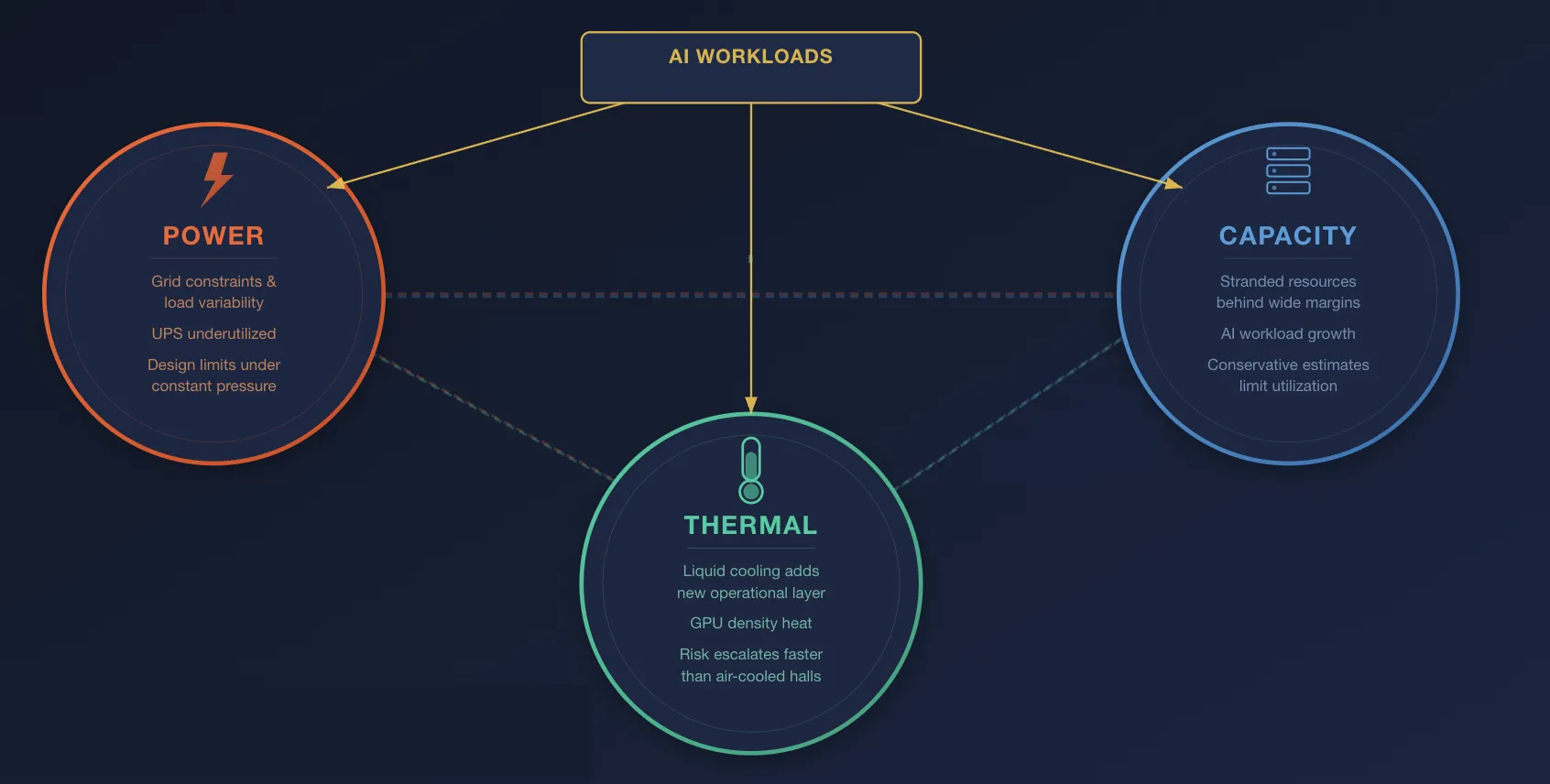

Data centers have long operated under competing operational constraints: ensuring reliable power delivery while controlling costs, maintaining thermal stability across increasingly dense infrastructure, and maximizing the utilization of capacity that is both capital-intensive to build and costly to leave idle.

While these challenges are not new, the conditions under which they must now be managed have changed materially. The rapid growth of AI workloads is increasing both the speed and severity of operational stress across the data center environment. Issues that could previously be addressed through conservative provisioning and manual oversight are becoming more difficult to manage within acceptable economic and operational limits.

As a result, the three most persistent operational pressures in the modern data center (power availability, thermal management and capacity utilization) can no longer be treated as distinct concerns. They are structurally interconnected, with constraints in one domain increasingly shaping outcomes in the others. The expansion of AI infrastructure is intensifying this interdependence and narrowing the margin for error.

This blog series explores digital twins and agentic AI, working in concert, as a practical solution for addressing these operational pressures and transforming how data centers are monitored, optimized, and operated.

A digital twin provides a continuously updated virtual representation of the physical environment, enabling operators to understand cross-system dependencies that are often obscured by siloed management tools. It also enables scenario simulation before operational change, helping reduce uncertainty and revealing usable capacity that might otherwise remain stranded.

Agentic AI extends this capability by enabling workflows and autonomous or semi-autonomous agents that can optimize cooling, power distribution, and workload placement in near real time. Together, these technologies can improve energy efficiency, thermal resilience, usable capacity, and the speed and quality of operational diagnosis.

Neither digital twins nor agentic AI can deliver on their promise on top of the fragmented data architectures that characterize many data centers today. Point-to-point integrations have produced environments in which data remains siloed across proprietary systems, is often collected through slow and inefficient polling mechanisms, and lacks the shared operational context required for higher-order reasoning, simulation, and coordinated automation.

To address this foundational gap, the series introduces a three-layer data maturity model.

Layer 1 (Data Streaming) establishes a real-time, event-driven data foundation built on MQTT and a Unified Namespace, replacing brittle point-to-point integrations with a scalable and decoupled architecture.

Layer 2 (Data Intelligence) enriches raw telemetry with semantic metadata, ontologies and knowledge graphs, creating data that is contextually coherent, physically grounded, and suitable for simulation and AI-driven decision support.

Layer 3 (Agentic Industrial Operations) provides the execution framework, policy boundaries, and audit mechanisms required for autonomous systems to operate safely and accountably in mission-critical environments.
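To make the first two layers a little more concrete, here is a minimal sketch in plain Python with no broker involved. The topic hierarchy (`dc1/hall-a/row-3/...`), the metadata fields, and the helper names `topic_matches` and `enrich` are illustrative assumptions, not a prescribed Unified Namespace layout or any vendor API. It shows how one hierarchical topic can be selected with the standard MQTT wildcards (`+` for a single level, `#` for all remaining levels) and how a raw reading might be wrapped with context before it reaches simulation or AI tooling.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Simplified MQTT filter matching with + and # wildcards.

    Ignores special cases such as topics starting with '$'.
    """
    f_parts = topic_filter.split("/")
    t_parts = topic.split("/")
    for i, f in enumerate(f_parts):
        if f == "#":
            # Multi-level wildcard: matches all remaining levels (including none).
            return True
        if i >= len(t_parts):
            return False
        if f != "+" and f != t_parts[i]:
            return False
    return len(f_parts) == len(t_parts)


# Hypothetical metadata registry keyed by topic (the Layer 2 context).
METADATA = {
    "dc1/hall-a/row-3/rack-12/cooling/supply-temp": {
        "unit": "degC",
        "asset_type": "rack",
        "measures": "coolant_supply_temperature",
    },
}


def enrich(topic: str, value: float) -> dict:
    """Attach whatever context is known for this topic to a raw reading."""
    return {"topic": topic, "value": value, **METADATA.get(topic, {})}


topic = "dc1/hall-a/row-3/rack-12/cooling/supply-temp"
# A consumer interested in all cooling data in the site subscribes once.
if topic_matches("dc1/+/+/+/cooling/#", topic):
    print(enrich(topic, 31.5))
```

The point of the sketch is the decoupling: producers publish to a stable path, and any number of consumers select what they need by pattern instead of through a dedicated integration.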

What Is the New Operational Reality of the Modern Data Center?

In this first post of the series, we explore the key challenges facing data center operations and the opportunities that data-driven optimization presents in response. We begin with the three core operational challenges faced by data center operators:

1. Power Infrastructure Under Pressure

Power infrastructure, from grid interconnection through UPS and rack-level distribution, now sits at the heart of the data center operating challenge. As AI workloads increase rack density and introduce sharper load variability, operators must continually assess whether existing electrical systems can support higher demand without breaching design and reliability limits.

At the same time, on-site battery capacity remains underutilized in many facilities. UPS systems were historically deployed for backup, not active energy optimization. Today, operators are increasingly exploring how these assets can support peak shaving and other behind-the-meter flexibility use cases, but adoption is still constrained by reliability requirements, battery lifecycle concerns, controls integration and site-specific operating policies.
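As a rough illustration of the peak-shaving idea, the sketch below caps grid draw at a limit by discharging a battery, while never dipping into a reserve held back for backup. All figures, the 15-minute step, and the function name `peak_shave` are assumptions chosen for the example; recharging, battery degradation and site policy are deliberately left out.

```python
def peak_shave(load_kw, grid_limit_kw, battery_kwh, reserve_kwh,
               max_discharge_kw, step_h=0.25):
    """Cap grid draw at grid_limit_kw using battery energy above a backup reserve.

    Returns the grid draw per interval and the remaining battery energy.
    """
    grid_draw = []
    for load in load_kw:
        excess = max(0.0, load - grid_limit_kw)
        usable_kwh = max(0.0, battery_kwh - reserve_kwh)
        # Discharge is bounded by the excess load, the discharge rating,
        # and the energy available above the reserve in this interval.
        discharge = min(excess, max_discharge_kw, usable_kwh / step_h)
        battery_kwh -= discharge * step_h
        grid_draw.append(load - discharge)
    return grid_draw, battery_kwh


# Hypothetical 15-minute load samples in kW.
draw, remaining = peak_shave(
    load_kw=[800, 1000, 1200, 900],
    grid_limit_kw=1000,
    battery_kwh=500,
    reserve_kwh=400,   # energy kept untouched for backup duty
    max_discharge_kw=300,
)
print(draw, remaining)
```

The `reserve_kwh` parameter is where the reliability constraint mentioned above shows up: the larger the reserve an operator insists on, the less energy is left for flexibility use cases.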

2. Thermal Management at a Tipping Point

The thermal demands of high-density GPU infrastructure are pushing many data centers beyond the practical limits of traditional air-cooled designs. In response, operators are increasingly adopting direct-to-chip liquid cooling, while immersion remains a more selective option for specific deployments. This is not a simple cooling retrofit. It introduces a new operational layer in which coolant flow, supply and return temperatures, pump performance, leak detection and pressure stability become critical to system reliability.

In these environments, thermal risk can escalate far faster than in conventional air-cooled halls. A sensor, pump or control failure in the cooling loop can quickly compromise hardware performance, accelerate degradation or trigger protective shutdowns. The monitoring approaches that were sufficient for legacy air-cooled environments are no longer enough. Precision, redundancy and real-time response are becoming essential to maintaining reliable operations.

3. Lack of Unified, Real-Time Visibility

Perhaps the most foundational challenge is the lack of unified, real-time visibility across critical subsystems. Many facilities still operate with fragmented monitoring stacks, where HVAC telemetry, power distribution data, compute performance, and network behavior are collected through separate tools, updated at different intervals, and often constrained by limited interoperability. The result is operational awareness that is descriptive, but not sufficiently coordinated for fast decision-making.

In AI-intensive environments, this gap becomes more consequential. Long-running training workloads, high-density compute, and volatile inference demand place constant pressure on power, cooling, and capacity simultaneously. Static monitoring and delayed feedback loops do not just reduce efficiency; they increase operational risk and make it harder to allocate resources accurately. At the same time, the wider AI market has already shown how easy it is to underestimate costs, with analysts warning that AI-related spending can be misjudged when usage patterns, pricing, and infrastructure requirements are poorly understood, according to IDC.

What Is the Opportunity for Data-Driven Optimization?

The same operational complexity that introduces risk also creates a powerful opportunity for operators able to turn data into coordinated action.

Instead of relying on broad safety margins and static operating assumptions, data-driven optimization enables facilities to respond dynamically to actual conditions across power, cooling and compute environments. Cooling strategies can be continuously adjusted based on live thermal loads, reducing unnecessary energy consumption while improving Power Usage Effectiveness (PUE). Equipment performance can be monitored in context, allowing early detection of declining pump efficiency, thermal imbalance or abnormal power behavior before these issues develop into failures.
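For readers less familiar with the metric, PUE is the ratio of total facility energy to the energy consumed by IT equipment, so a value closer to 1.0 means less overhead. The short sketch below uses made-up energy figures, purely to show how a reduction in cooling energy moves the number.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical daily figures in kWh.
it_load = 1000.0
baseline = pue(total_facility_kwh=1500.0, it_equipment_kwh=it_load)
# Same IT load, with cooling overhead reduced by an assumed 150 kWh.
optimized = pue(total_facility_kwh=1350.0, it_equipment_kwh=it_load)
print(baseline, optimized)
```

Because the IT load sits in the denominator, PUE is best compared over periods with similar workloads; otherwise a change in the ratio can reflect a change in compute demand as much as a change in cooling efficiency.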

At the same time, unified operational visibility allows operators to extract more value from existing infrastructure. Capacity can be allocated with greater precision, utilization can be increased without compromising resilience, and expansion decisions can be based on real constraints rather than conservative estimates. What emerges is not simply better monitoring, but a more adaptive operating model, one that improves efficiency, strengthens reliability, extends asset life, and creates a more credible foundation for carbon and energy reporting as regulatory expectations continue to rise.

Why Digital Twins and Agentic AI?

These challenges share a common cause: data center operations have become too complex, too dynamic, and too tightly interconnected to be managed optimally through human oversight and conventional monitoring tools alone. The volume of telemetry now generated across power, cooling, compute and network systems exceeds what operations teams can continuously interpret in real time. More importantly, the dependencies between these systems mean that no variable can be managed in isolation. A cooling adjustment affects power demand, power availability influences capacity headroom, and capacity constraints shape workload placement and service performance. Point solutions can monitor individual domains, but they cannot reason across the full operational system.
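The dependency chain described above can be pictured as a small directed graph. The sketch below is only an illustration: the node names are invented, and the edge from power demand to power availability is an added assumption used to join the chain. A breadth-first traversal then lists everything that a single change could touch downstream.

```python
from collections import deque

# Hypothetical "a change in X can affect Y" edges.
AFFECTS = {
    "cooling_adjustment": ["power_demand"],
    "power_demand": ["power_availability"],  # assumed link, not from the text
    "power_availability": ["capacity_headroom"],
    "capacity_headroom": ["workload_placement", "service_performance"],
}


def downstream(start: str, edges: dict) -> list:
    """Return every node reachable from start, in breadth-first order."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


print(downstream("cooling_adjustment", AFFECTS))
```

A tool that watches only one of these nodes has no way to produce this list, which is the limitation of point solutions that the paragraph above describes.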

A digital twin provides the foundational layer for this shift. By creating a continuously updated virtual representation of the physical environment, it turns fragmented telemetry into a unified operational model of the data center.

Agentic AI builds on that model to move operations beyond observation and alerts toward coordinated, goal-directed action. Within defined policies and governance boundaries, it can evaluate conditions, recommend or execute adjustments, and continuously optimize across competing objectives such as efficiency, resilience, utilization, and performance.

Together, digital twins and agentic AI provide the architectural basis for a more adaptive operating model. The rest of this blog series examines how each capability contributes to that transformation.

Ready to find out more about becoming an intelligent data center?

Kudzai Manditereza

Kudzai is a tech influencer and electronic engineer based in Germany. As a Senior Industrial Solutions Advocate at HiveMQ, he helps developers and architects adopt MQTT, Unified Namespace (UNS), IIoT solutions, and HiveMQ for their IIoT projects. Kudzai runs a popular YouTube channel focused on IIoT and Smart Manufacturing technologies and he has been recognized as one of the Top 100 global influencers talking about Industry 4.0 online.