Why Traditional Data Center Infrastructure Falls Short

The primary barrier to intelligent data center operations lies in the underlying data infrastructure: how telemetry is collected, transmitted, integrated, and exposed across the facility.

In this fourth post of the series, we examine how data center data architectures evolved and why the resulting model is poorly suited to real-time, intelligence-driven operations.

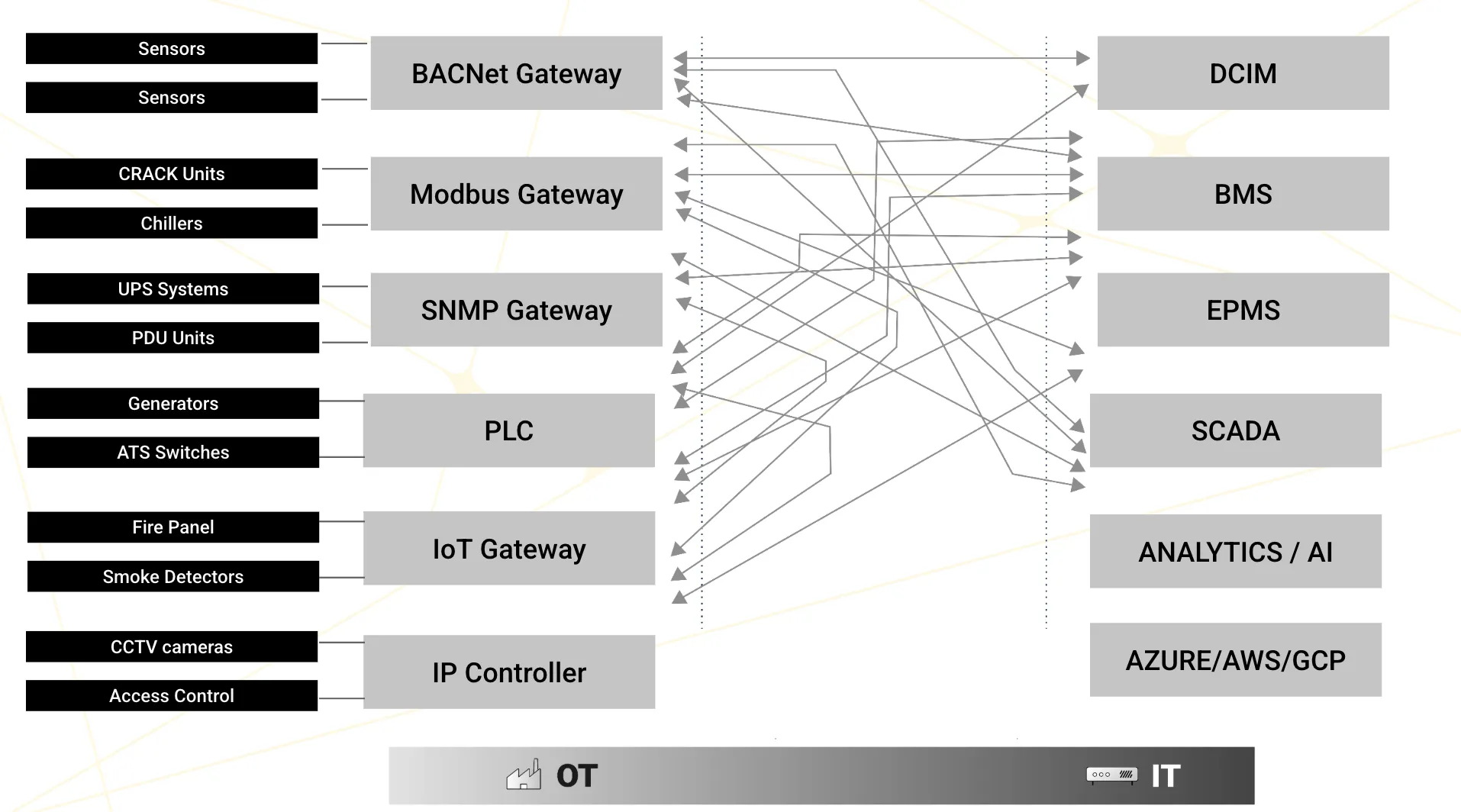

A modern data center generates telemetry from thousands of points across a wide range of systems, each using its own protocol and interface model. Environmental sensors may communicate over BACnet. Cooling equipment may rely on Modbus. UPS systems and PDUs often expose data through SNMP. Generators and switchgear may be integrated through industrial control systems. Fire and life-safety systems connect through dedicated gateways, while physical security systems operate through separate IP-based controllers. On the consuming side, that data must be delivered to an equally diverse set of applications, including DCIM platforms, building management systems, electrical power monitoring systems, SCADA environments, analytics platforms, and cloud services.

In most facilities, these producers and consumers have been connected through point-to-point integration. Each time a new data source needed to feed a new application, a custom interface was created to satisfy that immediate requirement. These integrations were typically hardcoded, tightly coupled, and designed around a single use case rather than a broader architectural model. Over time, as new systems were added and new reporting or control needs emerged, these one-off connections accumulated.

The result is a complex web of bespoke integrations in which gateways connect independently to multiple downstream platforms, while each platform pulls from multiple upstream systems through its own interfaces and data handling logic. What begins as a practical series of local decisions eventually becomes an architecture that is difficult to scale, difficult to modify, and increasingly incapable of supporting a unified, real-time view of operations.

Data Is Siloed by Design

Point-to-point integration does not create a unified operational data layer. The building management system may contain cooling telemetry for the chiller plant, the electrical power monitoring system may hold power distribution data, SCADA may monitor generator and switchgear status, and the DCIM platform may attempt to assemble a broader view from whatever feeds have been made available to it. But no single system holds the complete, continuously updated picture required to understand how thermal constraints, electrical capacity, and workload placement interact in real time.

A digital twin depends on exactly that kind of unified view. It requires a coherent operational model that represents all relevant subsystems simultaneously and preserves the relationships between them. When the required data is fragmented across incompatible platforms, simply assembling the model can become as difficult as the rest of the twin initiative.

No Semantic Consistency

Fragmentation is not only a connectivity problem. It is also a meaning problem. Each platform defines assets, measurements, metadata, and timestamps in its own way. The same physical chiller may appear as “chiller1” in the building management system, “ch101” in the maintenance platform, and “CHLR-01-ZONE-A” in the DCIM environment. A temperature value may be recorded with different precision, units, or timestamp formats depending on where it originates and where it is consumed.
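As a rough illustration of what resolving these differences involves, the sketch below maps the three chiller names from the example above onto one canonical identity and converts readings to a common unit and a UTC timestamp. The alias table, the canonical ID `site1/plant/chiller-01`, the source labels (`bms`, `cmms`, `dcim`), and the `normalize` function are all hypothetical names chosen for illustration, not part of any particular product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical alias table: (source system, local name) -> canonical asset ID.
ALIASES = {
    ("bms", "chiller1"): "site1/plant/chiller-01",
    ("cmms", "ch101"): "site1/plant/chiller-01",
    ("dcim", "CHLR-01-ZONE-A"): "site1/plant/chiller-01",
}


@dataclass(frozen=True)
class Reading:
    asset_id: str        # canonical identity shared by all consumers
    celsius: float       # temperature normalized to degrees Celsius
    timestamp: datetime  # timezone-aware, always UTC


def normalize(source, local_name, value, unit, ts):
    """Convert one source-specific temperature reading to the shared form."""
    asset_id = ALIASES[(source, local_name)]

    if unit == "F":
        celsius = (value - 32.0) * 5.0 / 9.0
    elif unit == "C":
        celsius = float(value)
    else:
        raise ValueError(f"unsupported unit: {unit!r}")

    # Accept epoch seconds or an ISO 8601 string that carries a UTC offset.
    if isinstance(ts, (int, float)):
        timestamp = datetime.fromtimestamp(ts, tz=timezone.utc)
    else:
        timestamp = datetime.fromisoformat(ts).astimezone(timezone.utc)

    return Reading(asset_id, round(celsius, 2), timestamp)


# Two systems report the same chiller at the same moment in different forms.
a = normalize("bms", "chiller1", 44.6, "F", 1704067200)
b = normalize("dcim", "CHLR-01-ZONE-A", 7.0, "C", "2024-01-01T00:00:00+00:00")
print(a.asset_id == b.asset_id, a.timestamp == b.timestamp)
```

In a point-to-point environment, logic like this tends to be reimplemented separately inside each integration, which is one reason the same asset ends up described inconsistently.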

For human operators, these discrepancies create friction and ambiguity. For a digital twin, they create a major modeling burden. For agentic AI, they are even more restrictive. A software agent cannot reason reliably across subsystems if it cannot confidently determine that two data points refer to the same asset, the same condition, or the same moment in time. Without semantic consistency, machine-speed coordination across domains is not possible.

Why Polling Cannot Support Real-Time Control

Most legacy monitoring environments were built around periodic polling. Data is collected at fixed intervals measured in seconds or minutes and then made available for dashboards, alarms, and historical analysis. That model is sufficient for retrospective visibility, but it is fundamentally misaligned with the demands of real-time optimization.

In the environments described earlier, control decisions may need to be made in response to fast-changing thermal or electrical conditions. Precision cooling at the CDU level may require responses aligned to rapid GPU load transitions. Emerging power-quality issues may need to be detected before they develop into equipment stress or instability. In these cases, delayed sampling removes the opportunity to act at the right moment. If the data arrives after the physical condition has already changed, the intelligence layer is operating on a stale representation of reality. No amount of sophistication in the AI layer can overcome that limitation.
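The effect of sampling delay can be shown with a small simulation. The sketch below is illustrative only: the signal shape, the 10-second polling interval, and the 40 °C limit are assumed values, and the `on_change` loop stands in for a device that reports by exception rather than being a model of any specific protocol.

```python
LIMIT_C = 40.0  # assumed alarm threshold, for illustration only


def signal(t):
    """Hypothetical inlet temperature (degC): a spike from t=12s to t=17s."""
    return 45.0 if 12 <= t < 17 else 30.0


def polled(interval, duration):
    """Sample the signal at fixed intervals, as a polling collector would."""
    return [(t, signal(t)) for t in range(0, duration, interval)]


def on_change(duration, step=1):
    """Emit an event only when the value changes (report by exception)."""
    events, last = [], None
    for t in range(0, duration, step):
        value = signal(t)
        if value != last:
            events.append((t, value))
            last = value
    return events


poll_samples = polled(interval=10, duration=60)
change_events = on_change(duration=60)

poll_saw = any(v > LIMIT_C for _, v in poll_samples)
event_saw = any(v > LIMIT_C for _, v in change_events)

print("polling saw the excursion:", poll_saw)
print("event-driven saw the excursion:", event_saw)
print("events:", change_events)
```

With these assumed numbers, the polls at 10 and 20 seconds fall on either side of the spike, so the polled history contains no trace of it, while the change-driven stream records both its start and its end.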

Fragile, Costly, and Structurally Unscalable

Point-to-point architectures also become progressively more fragile and expensive as the environment grows. Every new integration is a custom dependency. Adding a single new data source for use by multiple downstream systems often requires multiple separate interfaces, each with its own mapping logic, error handling, and maintenance burden. Over time, the number of connections grows faster than the number of systems involved, creating an architecture that is increasingly difficult to understand, support, and change.
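A back-of-the-envelope calculation makes the growth pattern concrete. The sketch below assumes the worst case, in which every consuming application needs data from every source, and compares it with a shared layer where each system connects once. The count of six producers and six consumers simply mirrors the categories listed earlier in this post; real facilities will differ.

```python
def point_to_point(producers, consumers):
    """Worst case: one custom interface per producer/consumer pair."""
    return producers * consumers


def hub_and_spoke(producers, consumers):
    """Each system connects once to a shared data layer."""
    return producers + consumers


producers, consumers = 6, 6
print("point-to-point:", point_to_point(producers, consumers))
print("hub-and-spoke: ", hub_and_spoke(producers, consumers))

# Marginal cost of adding one more data source.
extra_p2p = point_to_point(producers + 1, consumers) - point_to_point(producers, consumers)
extra_hub = hub_and_spoke(producers + 1, consumers) - hub_and_spoke(producers, consumers)
print("interfaces added by one new source:", extra_p2p, "vs", extra_hub)
```

Under these assumptions the point-to-point model needs 36 interfaces against 12 connections to a shared layer, and each additional source adds six more interfaces rather than one.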

This creates a structural barrier to modernization. Any upgrade to a source system, gateway, or consuming platform risks breaking the interfaces around it. As a result, organizations become cautious about replacing or improving the very systems that are limiting them. For digital twin and agentic AI initiatives, this is a critical problem. Both depend on the continuous addition of new data sources, new models, and new consuming applications. An architecture that becomes more brittle each time it expands is not simply inefficient. It is fundamentally incompatible with the operating model these initiatives require.

How to Establish a Unified Data Layer

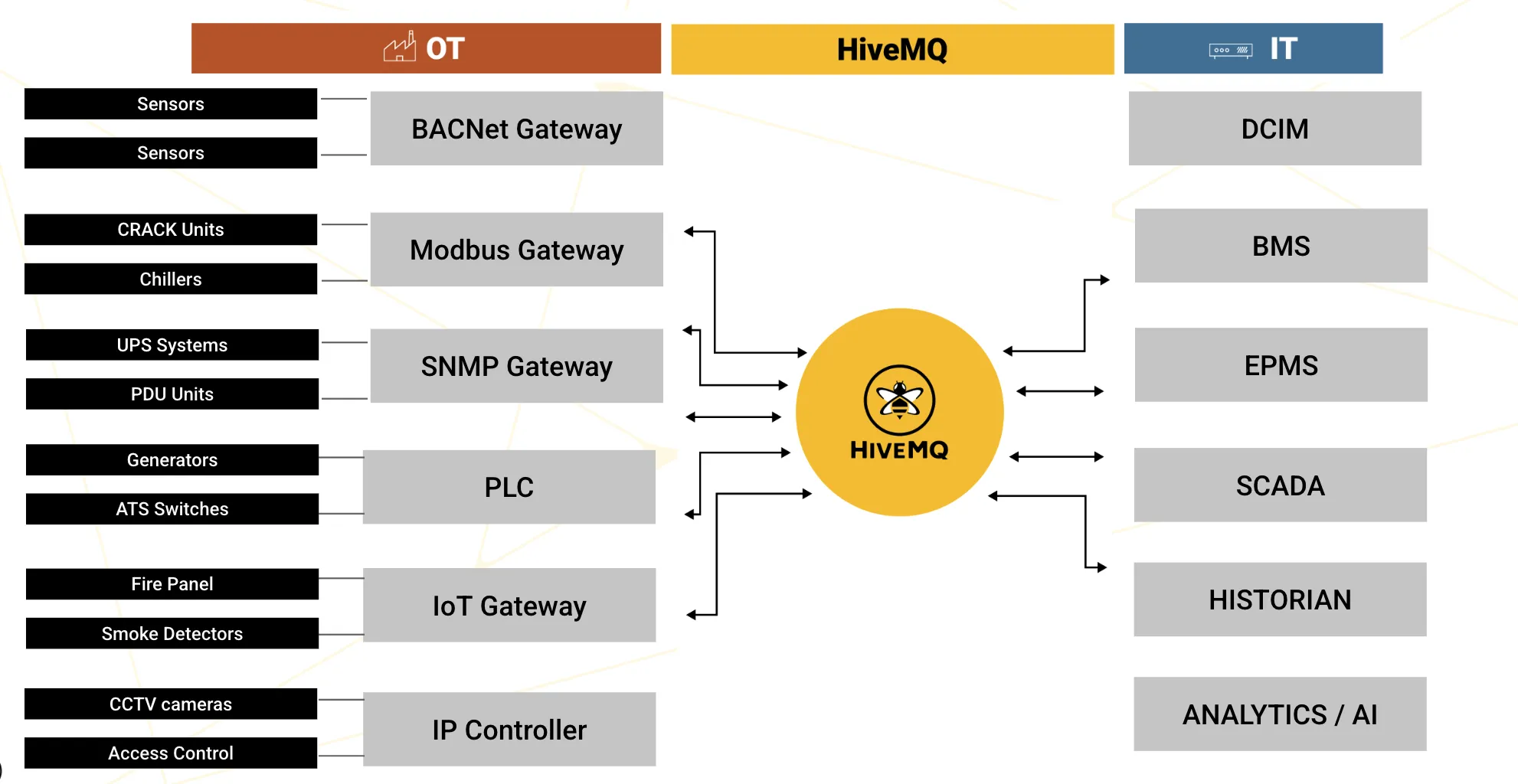

The architectural pattern that addresses these limitations replaces point-to-point integration with a centralized hub that decouples data producers from data consumers. Instead of every system building custom interfaces to every other system, each OT gateway, control system, or source platform connects once to a shared data layer, and each consuming application subscribes to the data it requires from that same layer. The result is a hub-and-spoke architecture that replaces a brittle mesh of bespoke connections with a far cleaner and more scalable operating model.

This shift is more than a simplification of integration. It provides the specific capabilities that digital twins and agentic AI depend on. Protocol translation and normalization can be handled once at the edge rather than reimplemented in every downstream connection. A unified namespace can provide consistent identity, structure, and context for every asset, signal, and event across the facility. Event-driven data movement replaces periodic polling, allowing information to flow at the moment it is generated rather than after a delay. Integration complexity scales linearly, because each new producer or consumer connects to the shared layer rather than to every other system individually. And because the architecture supports bidirectional data flow, it can serve not only monitoring and analytics, but also the closed-loop coordination and control required for autonomous optimization.
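The decoupling described here can be sketched with a very small in-process publish/subscribe hub. This is a toy stand-in for a real message broker, not an implementation of one: the `Hub` class, the topic hierarchy (`site1/hall-a/...`), and the payload values are hypothetical, and the wildcard matching borrows the MQTT convention (`+` for one level, `#` for the remainder) purely as an assumption for the example.

```python
def matches(pattern, topic):
    """MQTT-style match: '+' matches one level, '#' matches the rest."""
    p_parts, t_parts = pattern.split("/"), topic.split("/")
    for i, part in enumerate(p_parts):
        if part == "#":
            return True
        if i >= len(t_parts) or (part != "+" and part != t_parts[i]):
            return False
    return len(p_parts) == len(t_parts)


class Hub:
    """Toy shared data layer: producers publish, consumers subscribe."""

    def __init__(self):
        self._subs = []  # list of (pattern, callback)

    def subscribe(self, pattern, callback):
        self._subs.append((pattern, callback))

    def publish(self, topic, payload):
        # Deliver at the moment of publication; no consumer polls a source.
        for pattern, callback in self._subs:
            if matches(pattern, topic):
                callback(topic, payload)


hub = Hub()
twin, cooling, gateway = [], [], []

# Consumers subscribe once to the part of the namespace they need.
hub.subscribe("site1/#", lambda t, p: twin.append((t, p)))
hub.subscribe("site1/+/cooling/+/supply_temp_c", lambda t, p: cooling.append((t, p)))
# A gateway listens for commands, illustrating the bidirectional path.
hub.subscribe("site1/hall-a/cooling/cdu-03/cmd/#", lambda t, p: gateway.append((t, p)))

# Producers publish once, without knowing who consumes the data.
hub.publish("site1/hall-a/cooling/cdu-03/supply_temp_c", 18.4)
hub.publish("site1/hall-a/power/pdu-12/load_kw", 7.9)
# An optimizer writes a setpoint back through the same layer.
hub.publish("site1/hall-a/cooling/cdu-03/cmd/setpoint_c", 17.0)

print(len(twin), len(cooling), len(gateway))
```

Adding a new consumer in this model is one more `subscribe` call against the shared namespace; no producer needs to change.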

This is why the data movement layer is not simply a technical improvement. It is the enabling architecture for the operating model described throughout this series. Without it, digital twins remain difficult to build and costly to maintain, and agentic AI remains constrained by fragmented, delayed, and semantically inconsistent data. With it, the foundation exists for unified visibility, real-time reasoning, simulation, and machine-speed action. The final post in this series outlines a practical path for putting that foundation in place.

Kudzai Manditereza

Kudzai is a tech influencer and electronic engineer based in Germany. As a Senior Industrial Solutions Advocate at HiveMQ, he helps developers and architects adopt MQTT, Unified Namespace (UNS), IIoT solutions, and HiveMQ for their IIoT projects. Kudzai runs a popular YouTube channel focused on IIoT and Smart Manufacturing technologies, and he has been recognized as one of the Top 100 global influencers talking about Industry 4.0 online.