Industrial AI Use Cases and the Data Infrastructure That Powers Them

This article looks at the Industrial AI use cases manufacturers are actually deploying today, and at the three-layer data architecture that determines whether those use cases scale or stall.

The Industrial AI Use Cases That Matter

Five categories account for the majority of serious Industrial AI deployments today:

Predictive maintenance: Forecasting equipment failure from sensor data and history, with enough lead time to act before unplanned downtime occurs.

Visual quality inspection: Computer vision applied at the line for defect detection, either augmenting or replacing manual inspection.

Production planning and scheduling: AI-driven planners that respond to live constraints and disruptions, rather than running on assumptions that were valid a week ago.

Process optimization: Closed-loop tuning of operating parameters for yield, throughput, energy consumption or product quality.

Anomaly detection and root cause analysis: Surfacing outliers across the operation and tracing them back to actual origins, not just to the nearest symptom.

Each of these has succeeded in production. Each has also stalled in pilot. The dividing line, with very few exceptions, is the state of three underlying layers of data infrastructure.

What Is the Data Infrastructure That Industrial AI Actually Needs?

Industrial AI is a data problem before it's a model problem. The three layers described below are distinct, sequential, and interdependent. Weakness in any one of them limits what's possible in the others.

1. The Semantic Layer: Does Your Operation Have a Shared Language for Its Own Data?

The semantic layer captures the entities that make up a manufacturing environment and the relationships between them. In a production system, those entities typically include physical assets (machines, lines, plants), inputs (raw materials, components, intermediate work-in-progress), outputs (finished goods, by-products), and people (operators, planners, supervisors with defined roles).

Each entity carries its own attributes, such as a machine's model, location, capacity, or cost rate. Each is connected to others through meaningful relationships. A machine belongs to a line. A line sits in a plant. An operator is qualified to run a particular cell. A component flows into an intermediate part on its way to a finished SKU.
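To make the entity-and-relationship idea concrete, here is a minimal sketch in Python of a semantic graph stored as (subject, predicate, object) triples. The class names, the `part_of` and `qualified_for` predicates, and the example IDs are all hypothetical. A production system would more likely sit on a graph database or RDF store than on an in-memory set.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """A node in the semantic graph: an asset, input, output, or person."""
    id: str
    kind: str                                       # e.g. "machine", "line", "plant", "operator"
    attributes: dict = field(default_factory=dict)  # e.g. model, capacity, cost rate


class SemanticGraph:
    """Entities plus (subject, predicate, object) relationships between them."""

    def __init__(self) -> None:
        self.entities: dict[str, Entity] = {}
        self.relations: set[tuple[str, str, str]] = set()

    def add(self, entity: Entity) -> None:
        self.entities[entity.id] = entity

    def relate(self, subject: str, predicate: str, obj: str) -> None:
        self.relations.add((subject, predicate, obj))

    def targets(self, subject: str, predicate: str) -> list[str]:
        """All objects linked from `subject` by `predicate`, in a stable order."""
        return sorted(o for s, p, o in self.relations if s == subject and p == predicate)

    def containment_path(self, entity_id: str) -> list[str]:
        """Follow 'part_of' links upward, e.g. machine -> line -> plant."""
        path = [entity_id]
        parents = self.targets(entity_id, "part_of")
        while parents and parents[0] not in path:  # guard against accidental cycles
            path.append(parents[0])
            parents = self.targets(parents[0], "part_of")
        return path


# Hypothetical example data mirroring the relationships described in the text.
g = SemanticGraph()
g.add(Entity("plant-1", "plant"))
g.add(Entity("line-2", "line"))
g.add(Entity("press-7", "machine", {"model": "P100", "capacity_per_hour": 120, "cost_rate_per_hour": 85.0}))
g.add(Entity("op-42", "operator", {"role": "setter"}))
g.relate("press-7", "part_of", "line-2")       # a machine belongs to a line
g.relate("line-2", "part_of", "plant-1")       # a line sits in a plant
g.relate("op-42", "qualified_for", "press-7")  # an operator is qualified to run it

print(g.containment_path("press-7"))        # ['press-7', 'line-2', 'plant-1']
print(g.targets("op-42", "qualified_for"))  # ['press-7']
```

Keeping attributes on the entity and relationships as separate triples means new kinds of relationship can be added without changing the entity model.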

Done well, this layer does not try to model everything. It models the right things at the right level of abstraction.

Three artifacts sit underneath it. A semantic model establishes shared vocabulary across the organization, so that "equipment," "completion date," or "customer" mean the same thing in production, finance, and engineering.

An ontology formalizes the rules and constraints around that vocabulary, defining what relationships are valid, what properties are required, and what reasoning is permissible. A semantic graph (also referred to as a knowledge graph) contains the actual entities and relationships in the operation, populated according to the ontology's rules. Each of these is a distinct piece of work, and conflating them is one of the most common causes of project drift. Read our whitepaper, Building Ontology-Driven Intelligence for Industrial AI Agents, to learn how ontology-driven AI agents use semantic models, knowledge graphs, and structured data to enable reliable, scalable agentic automation.
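The distinction between the ontology and the semantic graph is easier to see when the two are kept as separate artifacts. The sketch below, again with hypothetical kinds, predicates, and IDs, holds the ontology as a small set of rules (valid relationship types and required attributes) and checks a populated graph against it. In practice these rules are often expressed in W3C standards such as OWL or SHACL rather than in application code.

```python
# Hypothetical ontology: which (subject kind, predicate, object kind) combinations are valid,
# and which attributes each kind of entity must carry.
VALID_RELATIONS = {
    ("machine", "part_of", "line"),
    ("line", "part_of", "plant"),
    ("operator", "qualified_for", "machine"),
    ("component", "consumed_by", "part"),
    ("part", "consumed_by", "sku"),
}
REQUIRED_ATTRIBUTES = {
    "machine": {"model", "capacity_per_hour"},
    "operator": {"role"},
}


def validate(entities: dict[str, dict], relations: list[tuple[str, str, str]]) -> list[str]:
    """Check a populated semantic graph against the ontology and return any violations."""
    errors = []
    for eid, entity in entities.items():
        present = set(entity.get("attributes", {}))
        for attr in sorted(REQUIRED_ATTRIBUTES.get(entity["kind"], set()) - present):
            errors.append(f"{eid}: missing required attribute '{attr}'")
    for s, p, o in relations:
        if s not in entities or o not in entities:
            errors.append(f"({s}, {p}, {o}): unknown entity")
            continue
        kinds = (entities[s]["kind"], p, entities[o]["kind"])
        if kinds not in VALID_RELATIONS:
            errors.append(f"({s}, {p}, {o}): {kinds} is not allowed by the ontology")
    return errors


# The semantic graph: actual entities and relationships (hypothetical data).
entities = {
    "plant-1": {"kind": "plant"},
    "line-2": {"kind": "line"},
    "press-7": {"kind": "machine", "attributes": {"model": "P100"}},
    "op-42": {"kind": "operator", "attributes": {"role": "setter"}},
}
relations = [
    ("press-7", "part_of", "line-2"),
    ("line-2", "part_of", "plant-1"),
    ("op-42", "part_of", "line-2"),  # invalid: operators are qualified for machines, not part of lines
]

problems = validate(entities, relations)
for problem in problems:
    print(problem)
```

Because the rules live apart from the data, the same graph can be re-validated whenever the ontology changes, which is one practical reason to treat the two as separate pieces of work.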

The theory is simple enough, but the semantic layer is harder to build than it looks, because most of the knowledge it depends on is tribal. It lives in operators' heads, in the unwritten judgement of senior planners, and in context that nobody documented because everyone assumed it was obvious. It does not sit cleanly in any system; it surfaces through interviews, time on the line, and the steady accumulation of edge cases. Making this knowledge explicit, governed, and machine-readable, rather than locked in private memory, is the work that platforms like HiveMQ Pulse are designed to do.

2. The Real-Time Layer: Is Your Operation Capturing What's Happening Right Now?

Once the semantic layer captures what exists, the real-time layer captures what is happening.

Production orders are released. Materials are drawn from stock. Operators are assigned to workstations. A machine throws an alarm. A batch drifts off-spec. A delivery slips by a day. The state of the operation is changing every second, and every change carries downstream consequences.

This is where another non-obvious property of manufacturing reveals itself: almost nothing is independent. A delay on one machine does not stay local; it changes what the planner does next, which orders take priority, how labor is reassigned, what arrives at the next workcenter, and how customer commit dates have to move. A bad recipe or a misconfigured parameter does not fail in one place. It propagates as second- and third-order effects across an interconnected network of decisions.
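The claim that almost nothing is independent can be illustrated with a small delay-propagation sketch. It assumes, purely for illustration, that the operation can be modeled as a directed acyclic graph in which each link carries some slack (buffer time) before a delay is passed downstream, and that each node inherits the worst delay arriving on any of its inputs. The node names and slack values are hypothetical, and real propagation also reshapes priorities and labor, which this time-only model ignores.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency network: (upstream, downstream) -> slack in hours
# available on that link before a delay is passed on.
EDGES = {
    ("press-7", "weld-cell-3"): 1.0,
    ("weld-cell-3", "paint-line"): 0.5,
    ("press-7", "order-1001"): 4.0,
    ("paint-line", "order-1002"): 0.0,
}


def propagate(initial_delays: dict[str, float], edges: dict[tuple[str, str], float]) -> dict[str, float]:
    """Push delays downstream; each node keeps the worst delay arriving on any input."""
    predecessors: dict[str, set[str]] = {}
    for up, down in edges:
        predecessors.setdefault(down, set()).add(up)
        predecessors.setdefault(up, set())
    delay = {node: initial_delays.get(node, 0.0) for node in predecessors}
    # Topological order guarantees every upstream delay is final before it is used.
    for node in TopologicalSorter(predecessors).static_order():
        for up in predecessors[node]:
            passed_on = max(0.0, delay[up] - edges[(up, node)])  # slack absorbs part of the delay
            delay[node] = max(delay[node], passed_on)
    return delay


impact = propagate({"press-7": 3.0}, EDGES)
print({node: hours for node, hours in impact.items() if hours > 0})
```

In this example a three-hour stop on one press reaches a customer order two steps away, while the order with four hours of slack is shielded, which is exactly the kind of interdependency an event log of isolated timestamps cannot show.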

This is why the real-time layer requires an event-driven foundation. Systems that treat events as isolated transactions (log it, store it, move on) break down precisely where Industrial AI needs them most. An Event-Driven Architecture (EDA) organized around a Unified Namespace (UNS) is designed to model state and interdependency, not just a sequence of timestamps. MQTT, with its publish/subscribe semantics and topic-based routing, has become the natural backbone for this layer. It is an open OASIS standard, it scales, and the enterprise capabilities required to run it across multiple plants, including bridging, clustering, and fine-grained security, are mature in production deployments such as those built on the HiveMQ Broker.
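Topic-based routing is what lets consumers of a Unified Namespace subscribe to exactly the slice of the operation they care about. The broker performs this matching, so applications do not normally implement it themselves; the sketch below only illustrates the MQTT wildcard rules (`+` matches exactly one topic level, `#` matches all remaining levels including the parent level, and must come last). The topic hierarchy follows an ISA-95-style enterprise/site/line/machine layout, a common UNS convention rather than a requirement, and every name in it is hypothetical.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic name matches a subscription topic filter.

    '+' matches exactly one level; '#' matches the parent level and any number of
    child levels and is only valid as the last level. Filters that start with a
    wildcard do not match topics beginning with '$' (e.g. '$SYS/...').
    """
    f_levels = topic_filter.split("/")
    t_levels = topic.split("/")
    if topic.startswith("$") and f_levels[0] in ("+", "#"):
        return False
    for i, level in enumerate(f_levels):
        if level == "#":
            return i == len(f_levels) - 1  # '#' anywhere but the end is an invalid filter
        if i >= len(t_levels):
            return False  # filter is longer than the topic
        if level != "+" and level != t_levels[i]:
            return False
    return len(f_levels) == len(t_levels)


# Hypothetical UNS topics published by machines across two plants.
events = [
    "acme/plant-1/line-2/press-7/alarm",
    "acme/plant-1/line-2/press-7/spindle/temperature",
    "acme/plant-2/line-1/oven-3/alarm",
]

# A maintenance service interested in every machine alarm in every plant:
alarms = [t for t in events if topic_matches("acme/+/+/+/alarm", t)]
# A plant-1 dashboard interested in everything under that site:
plant_1 = [t for t in events if topic_matches("acme/plant-1/#", t)]

print(alarms)
print(plant_1)
```

The point of the design is that publishers never need to know who consumes their data: adding a new consumer is a new subscription, not a new point-to-point integration.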

3. The Cognitive Layer: Does Your Operation Know What "Good" Actually Looks Like?

Above the semantic and real-time layers sits a third layer that, in most companies, is almost completely invisible. At best, it is buried inside legacy software as hard-coded logic that no one on the current team can fully explain.

This is the cognitive layer. It governs intent. It defines the shape of what is possible. It encodes how trade-offs are made, what "good" actually looks like, how the operation should behave when things go sideways, and what outcomes the business is genuinely trying to optimize for.

The real-time layer describes individual events. The cognitive layer shapes behavior over time. Without it, every node in the operation makes a locally rational choice, and the system as a whole drifts off course.

This is where the value actually accumulates. Surfacing the rules, governing them, resolving their contradictions, and applying a real quality gate to changes can transform how a company performs.

This is the layer where AI agents become genuinely useful, but only on a specific condition: that the agentic capability is built around trusted delegation, not open-ended automation. A domain expert states a goal in plain language. The agent operates within governed rules, on contextualized operational data, with traceable actions and human-checkable outcomes. AI is not the point. Trusted delegation is.
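One way to picture trusted delegation is as a gate between what an agent proposes and what actually happens. The minimal sketch below assumes hypothetical rule names, thresholds, and an in-memory audit log: every proposal is checked against explicit, governed rules, anything above a configured risk threshold is routed to a human, and every outcome is recorded so actions stay traceable. It is not a description of any particular agent framework.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Proposal:
    goal: str        # the domain expert's goal, in plain language
    action: str      # what the agent wants to do
    params: dict     # the concrete parameters of that action
    rationale: str   # the agent's explanation, kept for review


@dataclass
class Rule:
    name: str
    complies: Callable[[Proposal], bool]  # True if the proposal respects the rule


AUDIT_LOG: list[dict] = []


def delegate(proposal: Proposal, rules: list[Rule], needs_human: Callable[[Proposal], bool]) -> str:
    """Gate an agent's proposal through governed rules and record the outcome."""
    violated = [r.name for r in rules if not r.complies(proposal)]
    if violated:
        status = "rejected"
    elif needs_human(proposal):
        status = "pending_approval"  # a human checks the outcome before it is applied
    else:
        status = "approved"
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "goal": proposal.goal,
        "action": proposal.action,
        "params": proposal.params,
        "rationale": proposal.rationale,
        "violated": violated,
        "status": status,
    })
    return status


# Hypothetical governed rules for a shift planner.
rules = [
    Rule("overtime_cap", lambda p: p.params.get("overtime_hours", 0) <= 4),
    Rule("protect_priority_orders", lambda p: not p.params.get("delays_priority_order", False)),
]
proposal = Proposal(
    goal="Recover the output lost on line 2 this shift",
    action="add_overtime",
    params={"line": "line-2", "overtime_hours": 3, "delays_priority_order": False},
    rationale="Press 7 was down for 2.5 hours; overtime avoids re-sequencing priority orders.",
)
status = delegate(proposal, rules, needs_human=lambda p: p.params.get("overtime_hours", 0) > 2)
print(status)
```

Here the proposal passes both rules but exceeds the hypothetical two-hour threshold for autonomous action, so it waits for a human; the audit entry records the goal, the rationale, and the outcome either way.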

How Do These Layers Connect to Each Use Case?

Predictive maintenance looks like a sensor-and-ML problem on the surface. It only creates business value when an alert can be tied to a specific asset (semantic), correlated with what that asset is currently producing and the orders that depend on it (real-time), and routed into a maintenance decision that respects the live trade-off between unplanned downtime and planned intervention windows (cognitive).
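The trade-off in that last step can be written down explicitly. The sketch below compares the expected cost of waiting for the next planned window with the cost of stopping now, using a deliberately simple cost model that is an assumption, not a recommended method. The failure probability would come from the predictive model, the asset's line from the semantic layer, and the hourly cost of stopping from the orders currently depending on the asset (real-time layer). All figures and names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    asset_id: str
    p_fail_before_window: float  # model's estimate that the asset fails before the next planned window


@dataclass
class AssetContext:
    line: str                    # semantic layer: where the asset sits
    dependent_orders: list[str]  # real-time layer: orders currently relying on the asset
    stop_cost_now: float         # cost per hour of stopping it now, given those orders
    stop_cost_in_window: float   # cost per hour of stopping it in the next planned window


def maintenance_decision(alert: Alert, ctx: AssetContext,
                         unplanned_repair_hours: float, planned_repair_hours: float) -> dict:
    """Compare the expected cost of waiting with the cost of intervening now."""
    # Intervene now: a planned-length repair, paid at today's cost of stopping.
    cost_now = planned_repair_hours * ctx.stop_cost_now
    # Wait: with probability p the asset fails first (a longer, unplanned repair at today's cost);
    # otherwise it is repaired in the planned window at the window's lower cost.
    p = alert.p_fail_before_window
    expected_cost_wait = (p * unplanned_repair_hours * ctx.stop_cost_now
                          + (1 - p) * planned_repair_hours * ctx.stop_cost_in_window)
    decision = "intervene_now" if cost_now < expected_cost_wait else "wait_for_planned_window"
    return {
        "asset": alert.asset_id,
        "line": ctx.line,
        "orders_at_risk": ctx.dependent_orders,
        "cost_now": round(cost_now, 2),
        "expected_cost_wait": round(expected_cost_wait, 2),
        "decision": decision,
    }


ctx = AssetContext(line="line-2", dependent_orders=["order-1001", "order-1002"],
                   stop_cost_now=1500.0, stop_cost_in_window=200.0)
high_risk = maintenance_decision(Alert("press-7", 0.30), ctx, unplanned_repair_hours=8, planned_repair_hours=2)
low_risk = maintenance_decision(Alert("press-7", 0.10), ctx, unplanned_repair_hours=8, planned_repair_hours=2)
print(high_risk["decision"], low_risk["decision"])
```

The same alert leads to different decisions depending on the failure probability and on what the asset is producing right now, which is why the alert alone is not enough.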

Visual quality inspection depends on the semantic layer to know what is being inspected and against which specification, on the real-time layer to associate defects with a specific batch, operator, and upstream process conditions, and on the cognitive layer to decide whether to rework, scrap, deviate or hold under the constraints currently in play.

Production planning is mostly cognitive layer work, but only because the other two are doing their jobs. A planner needs an accurate model of resources and routings (semantic), live state on order progress and disruptions (real-time), and an explicit articulation of the planning policy itself (cognitive), which today is most often hiding inside someone's spreadsheet.

Process optimization for yield, energy, or throughput requires all three working together: a model of the process, real-time data from it, and an unambiguous statement of what the optimization is actually trying to achieve and against which trade-offs.
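As an illustration of what an unambiguous statement of the optimization target can look like, the sketch below separates the intent (weights on yield and energy, plus a hard quality limit) from the candidates a process model proposes, so the trade-off is explicit and reviewable rather than implied. The setpoints, predicted values, and weights are hypothetical, and a real optimizer would search a continuous space rather than pick from a short list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    setpoint_c: float           # hypothetical oven temperature setpoint
    yield_pct: float            # predicted by a process model (real-time data feeds it)
    energy_kwh_per_unit: float
    defect_rate_pct: float


# The cognitive-layer part: an explicit, reviewable statement of intent.
OBJECTIVE = {
    "weights": {"yield_pct": 1.0, "energy_kwh_per_unit": -2.0},  # reward yield, penalize energy
    "max_defect_rate_pct": 0.5,                                  # hard quality constraint
}


def score(c: Candidate, objective: dict) -> float:
    """Weighted sum of the quantities the objective cares about."""
    return sum(weight * getattr(c, name) for name, weight in objective["weights"].items())


def choose(candidates: list[Candidate], objective: dict) -> Optional[Candidate]:
    """Best-scoring candidate among those that satisfy the quality constraint."""
    feasible = [c for c in candidates if c.defect_rate_pct <= objective["max_defect_rate_pct"]]
    return max(feasible, key=lambda c: score(c, objective)) if feasible else None


candidates = [
    Candidate(180.0, yield_pct=96.0, energy_kwh_per_unit=1.2, defect_rate_pct=0.4),
    Candidate(190.0, yield_pct=97.5, energy_kwh_per_unit=1.6, defect_rate_pct=0.7),  # best yield, fails quality
    Candidate(175.0, yield_pct=95.0, energy_kwh_per_unit=1.0, defect_rate_pct=0.3),
]
best = choose(candidates, OBJECTIVE)
print(best.setpoint_c if best else "no feasible setpoint")
```

Changing the weights changes the answer, which is the point: the trade-off becomes a governed decision instead of a side effect of whoever tuned the model.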

Anomaly detection and root cause analysis is the use case where weakness in any layer becomes most visible. Without semantics, anomalies cannot be contextualized. Without real-time interdependency, causes get misattributed to whichever symptom is loudest. Without the cognitive layer, the analysis cannot tell you whether the anomaly even matters relative to what the operation is trying to do.
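The difference between a loud symptom and an actual origin can be sketched as an upstream walk. In the minimal example below, which uses hypothetical step names, the `upstream` map stands in for the semantic layer (which step feeds which) and the `anomalous` set stands in for the real-time layer (which steps are currently out of bounds). A root-cause candidate is an anomalous step whose own inputs look normal.

```python
def root_causes(symptom: str, upstream: dict[str, list[str]], anomalous: set[str]) -> list[str]:
    """Walk upstream from a symptom and return anomalous nodes whose own inputs look normal."""
    seen, stack, roots = set(), [symptom], []
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        parents = upstream.get(node, [])
        stack.extend(parents)
        if node in anomalous and not any(p in anomalous for p in parents):
            roots.append(node)  # the anomaly starts here rather than being inherited
    return sorted(roots)


# Hypothetical process: the loudest alarm is at final inspection.
upstream = {
    "final-inspection": ["paint-line"],
    "paint-line": ["oven-3", "pretreat-bath"],
    "oven-3": ["gas-valve-2"],
}
anomalous = {"final-inspection", "paint-line", "oven-3"}

print(root_causes("final-inspection", upstream, anomalous))  # ['oven-3']
```

Deciding whether the oven anomaly actually matters, given what the operation is trying to achieve, is still left to the cognitive layer.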

What Does This Mean for Your Industrial AI Program?

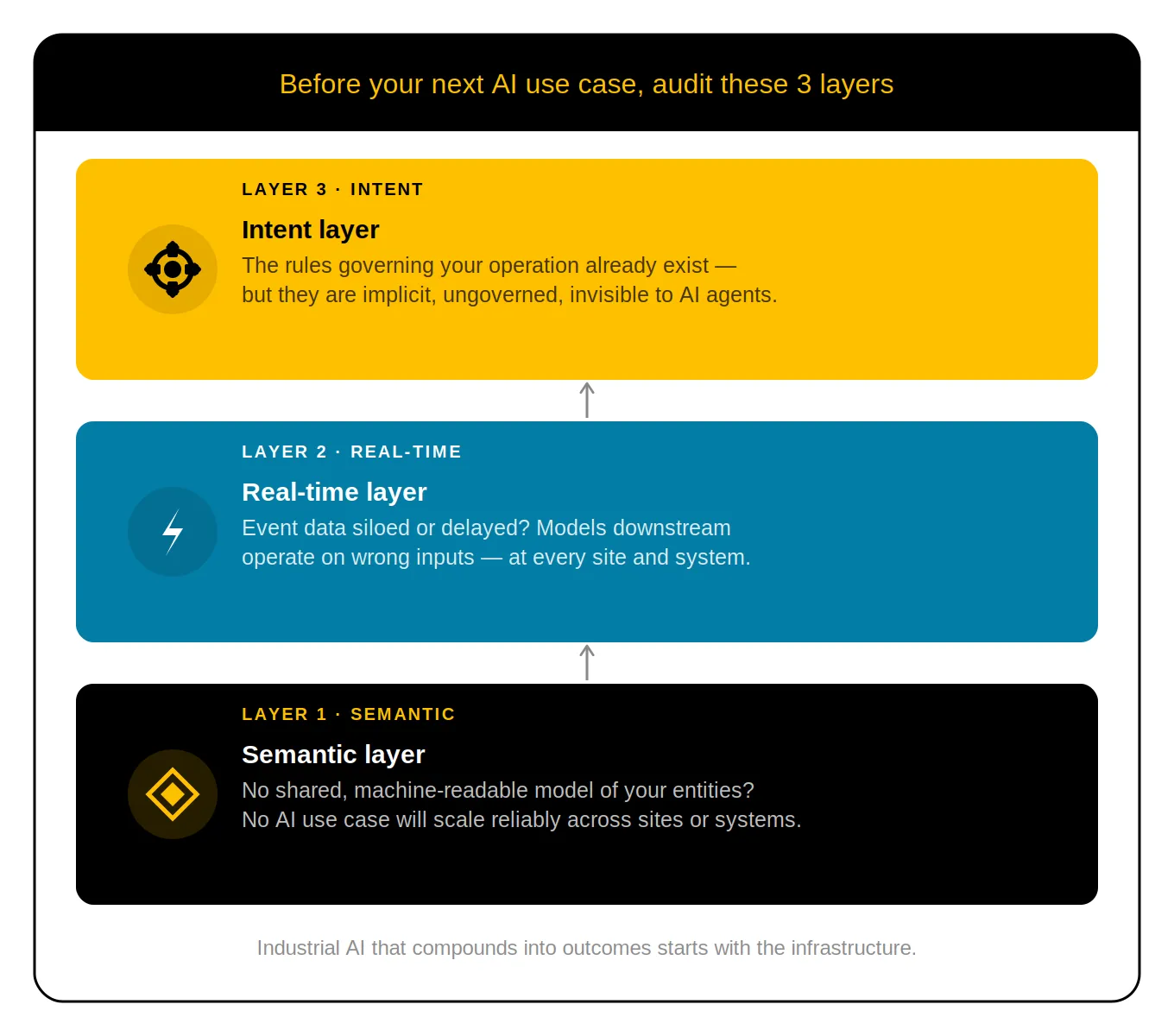

The practical implication is straightforward. Before investing in another AI use case, audit the three layers beneath it.

Start with the semantic layer. If your organization doesn't have a shared, machine-readable model of its own entities and relationships, no AI use case will scale reliably across sites or systems. HiveMQ Pulse is built to accelerate this work.

Then check the real-time layer. If your event data is siloed, delayed or disconnected from the context in the semantic layer, the models downstream will work on the wrong inputs. An MQTT-based Unified Namespace built on the HiveMQ Broker is the most scalable foundation for this layer.

Finally, surface the cognitive layer. The rules governing your operation are already there; they're just not explicit, not governed, and not accessible to AI systems that need to act on them.

Making them visible is where the most durable value accumulates.

Industrial AI that compounds into measurable outcomes doesn't start with the model. It starts with the infrastructure.

See how HiveMQ's platform connects, contextualizes and acts on real-time operational data, enabling your organization to activate its Industrial AI ambitions.


Kudzai Manditereza

Kudzai is a tech influencer and electronic engineer based in Germany. As a Senior Industrial Solutions Advocate at HiveMQ, he helps developers and architects adopt MQTT, Unified Namespace (UNS), IIoT solutions, and HiveMQ for their IIoT projects. Kudzai runs a popular YouTube channel focused on IIoT and Smart Manufacturing technologies and he has been recognized as one of the Top 100 global influencers talking about Industry 4.0 online.