A Data Maturity Path to Intelligent Data Center Optimization

For operators responsible for live facilities, existing infrastructure and long-term contractual commitments, the question is not whether a unified data architecture is valuable, but how to adopt it without disrupting ongoing operations.

The answer is a structured maturity path: a progression of architectural layers in which each stage delivers immediate operational value while establishing the foundation for the next. We explore this maturity path in the final post of the ‘Optimizing Data Center Operations with Digital Twins and Agentic AI’ series.

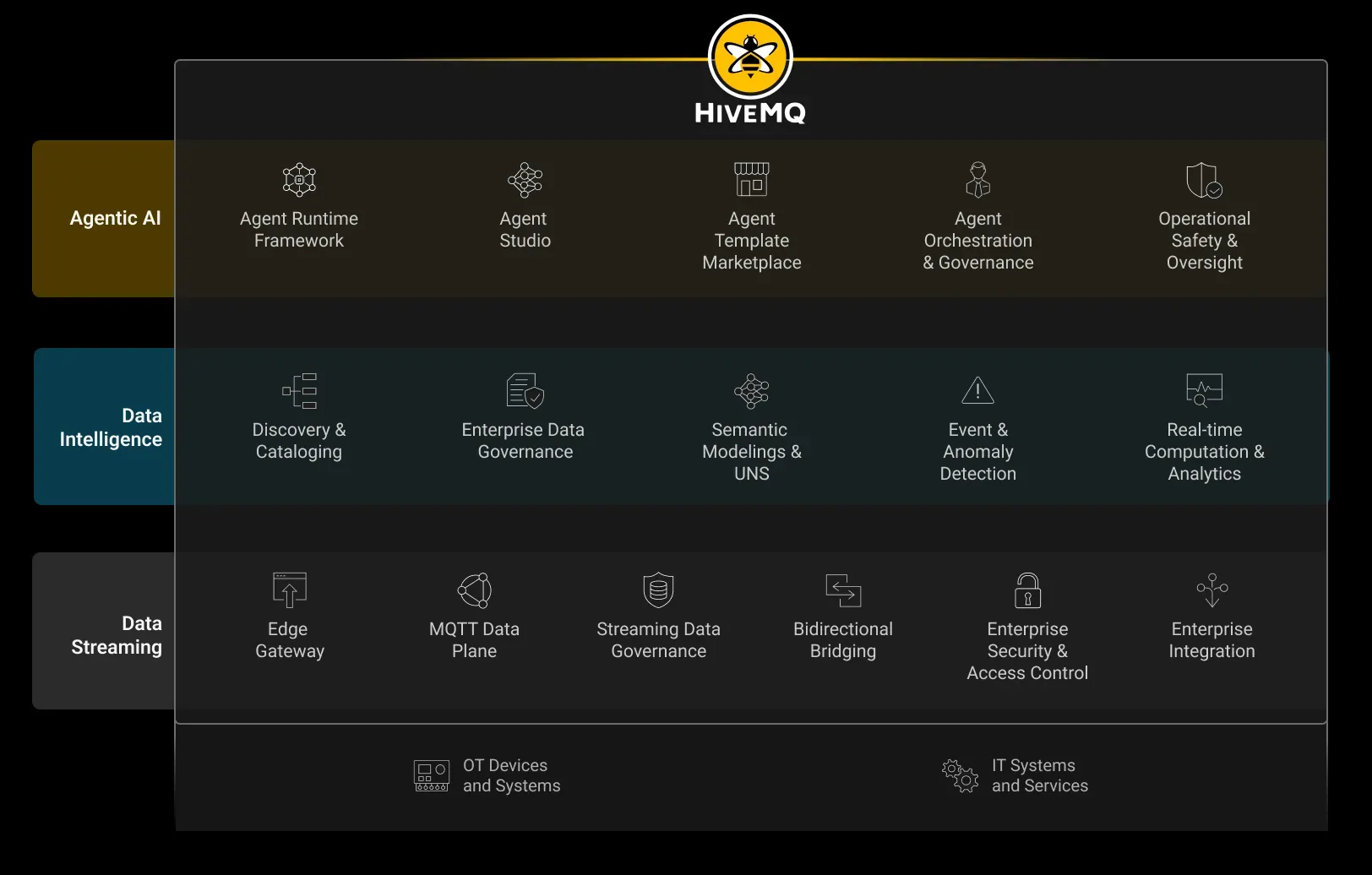

The first layer, Data Streaming, creates a real-time, event-driven foundation for operational data across the facility. The second, Data Intelligence, enriches and contextualizes that data so it can support higher-order capabilities such as simulation, digital twins, and machine reasoning. The third, Agentic AI Orchestration and Governance, provides the runtime framework, control mechanisms, and safety boundaries required for autonomous agents to operate in mission-critical environments.

Layer 1: Data Streaming - The Real-Time Foundation

Telemetry as a Core Operational Asset

The first architectural shift is to treat infrastructure telemetry not as a byproduct of individual systems, but as a core operational asset. Every signal (power draw, rack temperature, cooling performance, fault condition, workload utilization, and equipment state) must be made available through a shared data layer rather than remaining trapped inside isolated platforms. Each subsystem becomes a producer of real-time operational data, and each operator, application, analytics service, or AI agent consumes the subset of that data relevant to its role.

This is a fundamental change in how facility information is managed. Data is no longer gathered primarily for dashboards, alarms or after-the-fact reporting. It becomes an active layer of the operating model itself: continuously available, immediately usable, and shared across the full range of systems that depend on it.

The Unified Namespace and MQTT

The architectural pattern that enables this is the Unified Namespace (UNS): a centralized, structured data layer into which operational information is published once and from which all authorized consumers subscribe. Each source system connects to the namespace through a single integration point. Within that shared layer, data is organized with consistent structure, identity, and context, allowing it to be understood and reused across the facility without repeated remapping or duplication.

The transport layer for this model is MQTT, a lightweight publish-subscribe protocol designed for low-latency, event-driven data movement. MQTT allows the diverse systems inside the data center to communicate through a common messaging layer. Its decoupled architecture removes direct dependencies between producers and consumers. A sensor, controller or application publishes data to a defined topic, and any authorized consumer that requires that data subscribes to it. New data sources and new consuming applications can be added without requiring changes to existing integrations.

Instead of building and maintaining a growing web of custom system-to-system connections, the facility gains a single, scalable integration surface that supports change without introducing cascading complexity.
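
To make the publish-once, subscribe-by-topic model concrete, here is a minimal, broker-free sketch of how a UNS-style topic hierarchy and MQTT topic filters interact. The level names (`site/area/system/asset/metric`) and the example identifiers are assumptions chosen for illustration, not a prescribed naming standard, and the matcher is simplified (for example, it ignores the special handling of topics that start with `$`).

```python
def uns_topic(site, area, system, asset, metric):
    """Build a hierarchical topic path (level names are an assumed convention)."""
    return "/".join([site, area, system, asset, metric])


def topic_matches(topic_filter, topic):
    """Return True if an MQTT topic filter matches a topic name.

    '+' matches exactly one level. '#' matches all remaining levels
    (including none) and is expected to be the last level of the filter.
    """
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            return True
        if i >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[i]:
            return False
    return len(filter_levels) == len(topic_levels)


# A CDU publishes its supply temperature once...
topic = uns_topic("dc1", "hall-a", "cooling", "cdu-a", "supply-temp")

# ...and each consumer selects what it needs with a filter.
cooling_service = topic_matches("dc1/hall-a/cooling/#", topic)   # all cooling data
power_service = topic_matches("dc1/+/power/#", topic)            # power data only
temp_dashboard = topic_matches("dc1/+/+/+/supply-temp", topic)   # one metric, any asset
```

Adding a new consumer in this model means adding a new filter, not a new point-to-point integration, which is the decoupling the section describes.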

Immediate Operational Value

The Data Streaming layer delivers value well before a full digital twin or autonomous control capability is in place. With unified, real-time telemetry flowing through the namespace:

Cooling becomes adaptive. Rather than operating on fixed setpoints, cooling systems gain real-time visibility into thermal conditions across the entire hall and can adjust dynamically as workload heat maps shift, reducing the energy wasted by conservative, uniform overcooling.

Power orchestration becomes dynamic. Instead of provisioning for peak demand at all times, operators can balance load in real time, manage redundancy margins actively, and identify opportunities to deploy stranded UPS energy during demand surges.

Compute scheduling becomes coordinated. Workload placement can factor in live power availability, cooling headroom, and network capacity simultaneously, allowing jobs to be paused, deferred, or reallocated to maximize performance per watt.

Cost visibility becomes granular. Time-stamped, per-asset telemetry enables metered billing based on actual resource consumption (GPU utilization, power draw, and thermal load), replacing flat-rate models with transparent, value-aligned cost structures.
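
As a small worked example of the metered-billing idea, the sketch below integrates time-stamped power samples for one asset into energy and prices the result. The sample values, the 30-minute interval, and the tariff are all invented for illustration; a real billing model would likely also account for cooling overhead and utilization.

```python
from datetime import datetime


def energy_kwh(samples):
    """Integrate (timestamp, kW) samples into kWh using the trapezoidal rule."""
    total = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        hours = (t1 - t0).total_seconds() / 3600.0
        total += 0.5 * (p0 + p1) * hours
    return total


# Hypothetical power draw of one rack over one hour.
samples = [
    (datetime(2025, 1, 1, 0, 0), 10.0),
    (datetime(2025, 1, 1, 0, 30), 14.0),
    (datetime(2025, 1, 1, 1, 0), 12.0),
]

PRICE_PER_KWH = 0.20  # hypothetical tariff, in an arbitrary currency

kwh = energy_kwh(samples)    # 0.5*(10+14)*0.5 + 0.5*(14+12)*0.5 = 12.5 kWh
cost = kwh * PRICE_PER_KWH   # 12.5 * 0.20 = 2.50
```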

Layer 2: Data Intelligence - From Raw Signals to Operational Knowledge

Why Streaming Alone Is Not Enough

The Data Streaming layer solves a foundational problem: connectivity. It ensures that data can move in real time from source systems to the applications, operators, and services that depend on it. But streaming alone does not create the structured, contextualized information that digital twins and agentic AI require.

Raw telemetry from operational systems is often incomplete in meaning even when it is available in real time. Asset names may differ across platforms. Metadata may be sparse or inconsistent. Timestamps may not be fully synchronized. Measurements may contain anomalies, gaps, or values that are technically possible in the data stream but operationally misleading in context. A chiller identified as “chiller1” in one system and “ch101” in another conveys little to a digital twin or AI agent unless those references are reconciled and linked to the same physical asset, operating zone, and dependency chain. Without that additional layer of meaning, a digital twin will model the environment unreliably, and an AI system will learn patterns in the data without understanding the operational reality behind them.
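
The naming problem can be illustrated with a minimal alias table. This is only a sketch: the canonical ID `Chiller-01` and the lookup rules are assumptions, and in practice the mapping would come from an asset registry or ontology rather than a hard-coded dictionary.

```python
# Source-system names mapped to one canonical asset ID (assumed naming).
ALIASES = {
    "chiller1": "Chiller-01",  # name used by one system
    "ch101": "Chiller-01",     # name used by another system
}


def canonical_asset(raw_name):
    """Map a source-system name to a canonical asset ID, or None if unknown."""
    return ALIASES.get(raw_name.strip().lower())


same_asset = canonical_asset("chiller1") == canonical_asset(" CH101 ")
unknown = canonical_asset("pump7")  # unmapped names are surfaced, not guessed
```

Returning `None` for unmapped names is a deliberate choice in this sketch: an unreconciled reference is flagged for review instead of being silently attached to the wrong asset.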

Semantic Enrichment and Ontology

The purpose of the Data Intelligence layer is to convert raw telemetry into information that is semantically consistent, contextually complete, and operationally meaningful. Each data point is enriched with the identity of the asset it belongs to, the subsystem it is part of, the location in which it operates, and the relationships that give it meaning within the broader facility. A temperature reading is no longer just a number from a sensor. It becomes a measurement from a specific device, mounted on a specific CDU, serving a defined rack row, within a known cooling zone, connected to an identifiable upstream cooling path.
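
A hedged sketch of that enrichment step follows. The sensor ID, zone name, and field names are hypothetical; the point is only that a bare value is joined with asset context before it is passed downstream.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AssetContext:
    device: str
    cdu: str
    rack_row: str
    cooling_zone: str
    upstream_path: str


# Hypothetical context registry keyed by sensor ID.
CONTEXT = {
    "temp-sensor-17": AssetContext(
        device="temp-sensor-17",
        cdu="CDU-A",
        rack_row="Rack Row 1",
        cooling_zone="Zone-1",
        upstream_path="Chiller-01",
    ),
}


def enrich(sensor_id, value_c):
    """Attach asset context to a raw reading; return None if no context exists."""
    context = CONTEXT.get(sensor_id)
    if context is None:
        return None
    return {"value_c": value_c, **asdict(context)}


reading = enrich("temp-sensor-17", 24.5)
```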

This enrichment must be supported by a formal model of the facility and its relationships. That model takes the form of an ontology: a structured representation of assets, states, dependencies, and interactions, informed by domain expertise and often implemented through knowledge graphs or similar semantic frameworks. In the 5-megawatt data hall, the ontology can encode that Chiller-01 serves CDU-A through CDU-D, that those CDUs support Rack Rows 1 through 8, and that those rows depend on a particular UPS module and power bus. When a digital twin simulates a maintenance event or a change in operating conditions, those relationships allow downstream impacts to be traced automatically across cooling, power, and compute domains rather than inferred manually.
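
The impact tracing described here can be sketched as a graph traversal. The example below encodes only the cooling path from the text; the assignment of two rack rows to each CDU is an assumption made for illustration, and a production ontology would typically live in a knowledge graph rather than a Python dictionary.

```python
from collections import deque

# "serves" relationships; the per-CDU row assignment is assumed.
SERVES = {
    "Chiller-01": ["CDU-A", "CDU-B", "CDU-C", "CDU-D"],
    "CDU-A": ["Rack Row 1", "Rack Row 2"],
    "CDU-B": ["Rack Row 3", "Rack Row 4"],
    "CDU-C": ["Rack Row 5", "Rack Row 6"],
    "CDU-D": ["Rack Row 7", "Rack Row 8"],
}


def downstream(asset):
    """Return every asset reachable from `asset`, in breadth-first order."""
    visited = {asset}
    impacted = []
    queue = deque([asset])
    while queue:
        node = queue.popleft()
        for child in SERVES.get(node, []):
            if child not in visited:
                visited.add(child)
                impacted.append(child)
                queue.append(child)
    return impacted


# Taking Chiller-01 offline touches all four CDUs and all eight rack rows.
chiller_impact = downstream("Chiller-01")
cdu_b_impact = downstream("CDU-B")
```

The same traversal could be run over power relationships (for example, UPS module to rack rows) to trace impacts across domains.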

External Context and Operational Variability

Simulation and AI reasoning depend not only on an accurate description of the facility, but also on an understanding of how the facility behaves under varying conditions. For that reason, the Data Intelligence layer must extend beyond static asset relationships and include the external and operational context that shapes system behavior over time.

This includes external drivers such as weather conditions that influence cooling demand, utility conditions that affect power availability or cost, and workload patterns that alter heat density and capacity pressure. It also includes internal sources of variability such as setpoint changes, maintenance windows, equipment degradation, changing load distributions, and the actual sensor responses associated with those events. Taken together, this context allows the data layer to capture not only the current state of the facility, but the range of behaviors the facility may exhibit under different operating conditions. That behavioral understanding is essential for high-fidelity simulation and for AI systems expected to reason across dynamic, interdependent environments.

Ensuring Simulation Integrity

A further responsibility of the Data Intelligence layer is to ensure that the data and models feeding simulation remain physically and operationally credible. A digital twin is only valuable if its outputs remain grounded in the real constraints of the facility. If the twin permits scenarios that violate equipment ratings, thermal limits, hydraulic behavior, or electrical constraints, then its recommendations may be misleading at best and dangerous at worst.

To prevent this, the Data Intelligence layer must embed engineering rules, asset limits, and operational constraints directly into the data model and simulation context. These rules can be derived from equipment specifications, control logic, domain expertise, and the ontology itself. Their purpose is to ensure that simulated states, inferred conditions, and synthetic training scenarios remain within the bounds of physical possibility and operational policy.
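
One simple way to picture embedded constraints is a limit table that every simulated state is checked against. The variable names and numeric limits below are placeholders, not real equipment ratings; actual limits would come from equipment specifications and operational policy.

```python
# Hypothetical operating limits: name -> (minimum, maximum).
LIMITS = {
    "supply_temp_c": (15.0, 30.0),
    "ups_load_pct": (0.0, 80.0),
}


def violations(state):
    """Return a list of human-readable limit violations for a simulated state."""
    problems = []
    for name, (low, high) in LIMITS.items():
        value = state.get(name)
        if value is None:
            problems.append(f"{name}: missing")
        elif not low <= value <= high:
            problems.append(f"{name}: {value} outside [{low}, {high}]")
    return problems


credible = violations({"supply_temp_c": 22.0, "ups_load_pct": 60.0})
not_credible = violations({"supply_temp_c": 22.0, "ups_load_pct": 95.0})
```

A scenario with a non-empty violation list would be rejected before it could be used as a recommendation or as synthetic training data.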

Layer 3: Agentic AI Orchestration and Governance

Once the Data Streaming and Data Intelligence layers are in place, the technical foundation exists for AI agents that can observe facility conditions, reason across the digital twin, and take action in response. But data center operations are among the highest-stakes environments for autonomous systems. Decisions related to thermal stability, power distribution, workload continuity, and infrastructure resilience can carry immediate operational consequences, and errors in one domain can propagate quickly into others.

For that reason, effective autonomy requires more than capable models or fast decision-making. It requires a structured operating framework that ensures autonomous behavior remains safe, bounded, predictable, and aligned with operational intent. In practice, this means agents must operate within an explicit runtime and governance model that controls how decisions are made, how actions are validated, and how authority is exercised.

The Agent Runtime Framework

At the center of this layer is an agent runtime framework: the environment in which agents are authorized to perceive, reason, coordinate, and act under defined constraints.

Defined authority boundaries

Each agent must operate within an explicitly defined scope. That scope includes the systems it is permitted to observe, the variables it may influence, the magnitude of acceptable changes, and the conditions under which control must revert to a human operator. For example, a chiller optimization agent may be permitted to adjust staging logic or operating setpoints within validated ranges, while any action that affects redundancy posture, maintenance status, or protection settings remains subject to human approval.
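
A minimal sketch of such a scope check is shown below. The variable name, range, and step size are invented for illustration; the structure simply mirrors the elements listed above: what an agent may influence, by how much, and when a human must decide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scope:
    min_value: float
    max_value: float
    max_step: float  # largest single change the agent may make


# Hypothetical scope for a chiller optimization agent.
CHILLER_AGENT_SCOPE = {
    "chw_supply_setpoint_c": Scope(min_value=16.0, max_value=22.0, max_step=1.0),
}


def authorize(variable, current, proposed, scope=CHILLER_AGENT_SCOPE):
    """Classify a proposed change as 'allow', 'reject', or 'human_approval'."""
    rule = scope.get(variable)
    if rule is None:
        # Anything outside the agent's scope goes to a human operator.
        return "human_approval"
    if not rule.min_value <= proposed <= rule.max_value:
        return "reject"
    if abs(proposed - current) > rule.max_step:
        return "reject"
    return "allow"


small_change = authorize("chw_supply_setpoint_c", 18.0, 18.5)
large_change = authorize("chw_supply_setpoint_c", 18.0, 20.0)
out_of_scope = authorize("redundancy_mode", 1.0, 0.0)
```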

Multi-agent coordination

As multiple agents begin operating across cooling, power, and workload domains, coordination becomes essential. Actions that are locally valid within one subsystem may have unintended consequences elsewhere. The orchestration layer ensures that agents do not act in isolation. It evaluates dependencies, resolves conflicts, and enforces consistency across concurrent decisions so that one agent’s optimization does not undermine another agent’s objective or create instability at the system level.
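
The sketch below shows only the narrowest form of coordination: resolving direct conflicts when two agents propose values for the same variable. The agent names, variables, and the fixed priority ordering are assumptions; a real orchestration layer would also evaluate cross-domain dependencies rather than rely on priority alone.

```python
# Lower number wins; this ordering is an assumed policy, not a recommendation.
PRIORITY = {"power-agent": 0, "cooling-agent": 1, "workload-agent": 2}


def resolve(proposals, priority=PRIORITY):
    """Pick one (agent, value) per variable from (agent, variable, value) proposals."""
    chosen = {}
    for agent, variable, value in proposals:
        current = chosen.get(variable)
        if current is None or priority[agent] < priority[current[0]]:
            chosen[variable] = (agent, value)
    return chosen


proposals = [
    ("workload-agent", "row3_power_cap_kw", 55.0),
    ("power-agent", "row3_power_cap_kw", 40.0),   # conflicts with the line above
    ("cooling-agent", "cdu_a_flow_pct", 70.0),
]

decisions = resolve(proposals)
```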

Simulation-gated execution

Before autonomous actions are applied to the live facility, they can be tested against the digital twin. The projected effects of a proposed action are evaluated against operational constraints, reliability requirements, and service-level thresholds. If the action remains within validated bounds, it may proceed. If it introduces unacceptable risk, it is blocked, logged, and surfaced for review. This creates a control layer in which simulation is not only a planning tool, but also an active safeguard for live operations.
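
The gating logic can be sketched as follows. The `simulate` function is a stand-in for a digital twin: it uses a toy linear relationship, not real thermal physics, and the threshold and field names are hypothetical.

```python
MAX_INLET_C = 27.0  # hypothetical service-level threshold


def simulate(state, action):
    """Toy stand-in for a digital twin: project inlet temperature linearly."""
    projected = dict(state)
    projected["inlet_temp_c"] = (
        state["inlet_temp_c"] + 0.8 * action["setpoint_delta_c"]
    )
    return projected


def gate(state, action, review_log):
    """Return True if the action may proceed; otherwise block and log it."""
    projected = simulate(state, action)
    if projected["inlet_temp_c"] <= MAX_INLET_C:
        return True
    review_log.append({"action": action, "projected": projected})
    return False


review_log = []
state = {"inlet_temp_c": 24.0}

small_ok = gate(state, {"setpoint_delta_c": 2.0}, review_log)  # projects 25.6
large_ok = gate(state, {"setpoint_delta_c": 5.0}, review_log)  # projects 28.0
```

The live `state` is never modified by the gate in this sketch; only the projected copy is evaluated, which keeps the check free of side effects.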

Full auditability

Every agent decision must be traceable. That includes the data the agent observed, the context it used, the reasoning path it followed, the action it selected, and the resulting outcome. This record is essential not only for regulatory or internal compliance, but also for incident analysis, operational transparency, and trust-building. Organizations do not expand autonomous authority because autonomy is available; they do so because its behavior has been demonstrated, understood, and validated over time.
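
One possible shape for such a record is sketched below, with one field per element listed above. The field names and example values are illustrative assumptions, not a standard schema.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    agent: str
    observed: dict    # data the agent saw
    context: dict     # context it used
    reasoning: list   # ordered reasoning steps
    action: dict      # action it selected
    outcome: str      # what happened as a result
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record):
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    agent="chiller-agent",
    observed={"inlet_temp_c": 24.0},
    context={"cooling_zone": "Zone-1"},
    reasoning=["inlet below threshold", "raise setpoint to save energy"],
    action={"setpoint_delta_c": 0.5},
    outcome="applied",
)
line = to_log_line(record)
```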

Graceful degradation and human override

Human operators must remain able to pause, override, or revoke agent authority at any time. Just as importantly, the system must be designed to respond safely when an agent encounters conditions outside its validated operating envelope. In those situations, the correct behavior is not uncontrolled action or silent failure, but narrowing of authority, escalation to human teams, and orderly fallback to safer operating modes. In high-stakes environments, graceful degradation is a core requirement of trustworthy autonomy.
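
The fallback behavior can be pictured as a small state machine. The three modes and the transition rules below are assumptions made for illustration; the key property is that authority only narrows automatically and is never restored without an explicit human decision.

```python
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"  # agent acts within its scope
    ADVISORY = "advisory"      # agent recommends, humans act
    FALLBACK = "fallback"      # fixed, conservative operating mode


def next_mode(current, in_envelope, human_override):
    """Narrow authority when conditions leave the validated envelope."""
    if human_override:
        return Mode.FALLBACK
    if not in_envelope:
        if current is Mode.AUTONOMOUS:
            return Mode.ADVISORY
        return Mode.FALLBACK
    # Inside the envelope the mode is held; it is never promoted automatically.
    return current


mode = Mode.AUTONOMOUS
mode = next_mode(mode, in_envelope=False, human_override=False)  # -> ADVISORY
mode = next_mode(mode, in_envelope=True, human_override=False)   # stays ADVISORY
```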

Conclusion

Digital twins and agentic AI offer a powerful new operating model for the data center, but they only become viable when supported by the right data foundation. Real-time streaming makes operational signals continuously available. Data intelligence gives those signals shared meaning, physical grounding, and simulation value. Agentic AI orchestration and governance turn that understanding into safe, auditable, autonomous action.

Together, these capabilities make it possible to move beyond conservative, reactive operations toward a more adaptive model, one that increases usable capacity, improves energy efficiency, accelerates diagnostics, extends asset life, and reduces the need for unnecessary capital expansion. In an environment where both compute demand and infrastructure constraints are intensifying, that shift is no longer optional. It is becoming a strategic requirement.

The organizations that build this foundation first will be best positioned to unlock more value from existing infrastructure, respond more intelligently to changing conditions, and operate with a level of speed and precision that legacy architectures cannot support. The intelligent, autonomous data center is not a distant concept. Its enabling technologies already exist. The defining question now is which operators will put the data architecture in place to realize it.

Kudzai Manditereza

Kudzai is a tech influencer and electronic engineer based in Germany. As a Senior Industrial Solutions Advocate at HiveMQ, he helps developers and architects adopt MQTT, Unified Namespace (UNS), IIoT solutions, and HiveMQ for their IIoT projects. Kudzai runs a popular YouTube channel focused on IIoT and Smart Manufacturing technologies and he has been recognized as one of the Top 100 global influencers talking about Industry 4.0 online.