Optimizing Data Center Operations with Digital Twins and Agentic AI

The New Operational Reality of the Modern Data Center

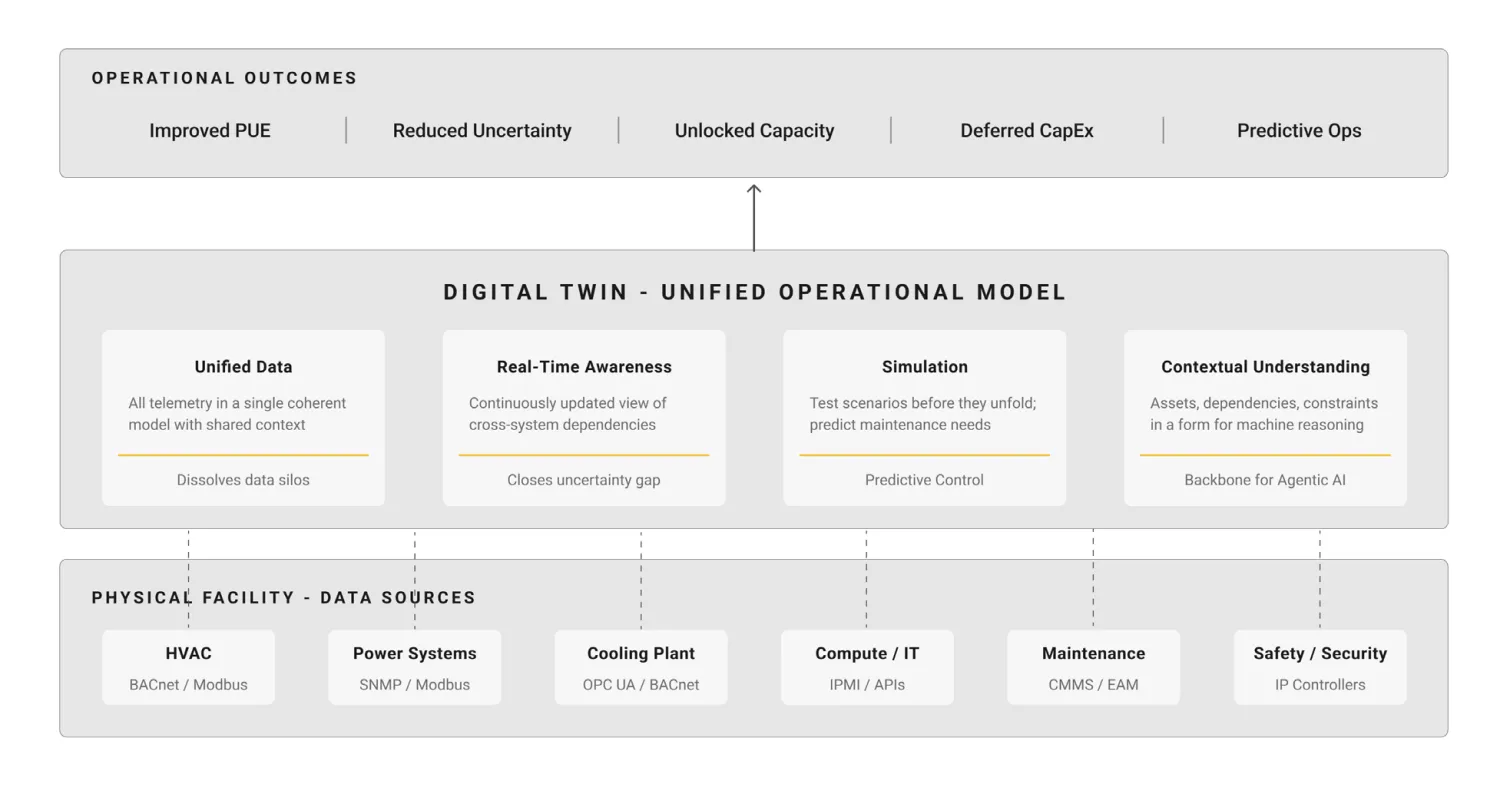

Data centers must now manage three persistent operational pressures: power availability, thermal management, and capacity utilization. These are no longer separate concerns. Constraints in one domain increasingly affect outcomes in the others.

AI workloads are increasing both the speed and severity of operational stress, making it more difficult to manage these systems within acceptable economic and operational limits.

Why Current Approaches Fall Short

Many facilities operate with:

Fragmented monitoring systems across power, cooling, compute, and network

Data collected at different intervals

Limited interoperability between systems

This results in operational awareness that is descriptive but not sufficiently coordinated for fast decision-making.

In AI-intensive environments, delayed feedback and static monitoring increase operational risk and reduce efficiency.

The Opportunity for Data-Driven Optimization

Data-driven optimization allows facilities to respond dynamically to real conditions. Instead of relying on static assumptions, operators can adjust cooling strategies based on live thermal loads, monitor equipment performance in context, and detect early signs of inefficiency or failure.

This approach improves energy efficiency, reliability, asset life, and capacity utilization. It also enables more accurate allocation of resources and better-informed expansion decisions.

What You’ll Learn from This Whitepaper

1. Why digital twins are foundational

A digital twin creates a continuously updated virtual representation of the physical environment. It enables a deeper understanding of cross-system dependencies, supports scenario simulation before operational changes, helps identify usable capacity, and reduces uncertainty in day-to-day operations.

2. How agentic AI enables coordinated optimization

Agentic AI builds on the digital twin to evaluate system conditions, recommend or execute adjustments, and optimize across efficiency, resilience, utilization, and performance. It operates within defined policies and governance boundaries to ensure controlled and reliable outcomes.
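As a rough illustration of what "operating within defined policies and governance boundaries" can mean in practice, the sketch below bounds a requested cooling setpoint change and records every decision. The class names, the temperature limits, and the step size are all hypothetical, chosen only for the example; they are not taken from the whitepaper.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SetpointPolicy:
    """Hypothetical governance boundary for a supply-air setpoint."""
    min_c: float       # lowest setpoint the agent may apply
    max_c: float       # highest setpoint the agent may apply
    max_step_c: float  # largest change allowed in a single action


@dataclass
class BoundedAgent:
    """Applies a policy to every proposed change and keeps an audit trail."""
    policy: SetpointPolicy
    audit_log: list = field(default_factory=list)

    def decide(self, current_c: float, desired_c: float) -> float:
        p = self.policy
        # Limit how far one action may move the setpoint.
        step = max(-p.max_step_c, min(p.max_step_c, desired_c - current_c))
        # Keep the result inside the absolute limits.
        approved = max(p.min_c, min(p.max_c, current_c + step))
        # Record what was asked for and what was actually approved.
        self.audit_log.append({
            "current_c": current_c,
            "desired_c": desired_c,
            "approved_c": approved,
            "modified": approved != desired_c,
        })
        return approved


# Illustrative values only.
agent = BoundedAgent(SetpointPolicy(min_c=18.0, max_c=27.0, max_step_c=1.0))
first = agent.decide(current_c=22.0, desired_c=26.0)   # limited by step size
second = agent.decide(current_c=26.5, desired_c=30.0)  # limited by max_c
```

In this sketch the agent never acts outside the policy, and the audit log shows whenever a request was modified, which is one simple way to keep automated adjustments reviewable.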

3. Why traditional data architectures limit progress

Most data center environments rely on point-to-point integrations, siloed data across proprietary systems, and polling-based data collection. These approaches limit real-time visibility, reduce coordination across systems, make scaling difficult, and prevent effective simulation and automation.
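The visibility gap between polling and event-driven collection can be shown with a small simulation. This is a minimal sketch with made-up inlet temperatures, a hypothetical polling interval, and a hypothetical deadband; it assumes the event-driven source publishes only when a value changes by more than the deadband.

```python
def poll(samples, every):
    """Readings seen by a poller that samples once every `every` ticks."""
    return [(i, v) for i, v in enumerate(samples) if i % every == 0]


def report_by_exception(samples, deadband):
    """Readings published when the value moves more than `deadband`
    away from the last published value."""
    events = []
    last = None
    for i, v in enumerate(samples):
        if last is None or abs(v - last) > deadband:
            events.append((i, v))
            last = v
    return events


# Made-up rack inlet temperatures (deg C) with a short spike at tick 3.
inlet_temps_c = [24.0, 24.1, 24.0, 31.5, 24.2, 24.1, 24.0, 24.1]

polled = poll(inlet_temps_c, every=5)
events = report_by_exception(inlet_temps_c, deadband=1.0)

print("polled:", polled)
print("events:", events)
```

With these values the poller reads ticks 0 and 5 and never sees the spike, while the event-driven stream publishes the spike and the recovery and stays silent during steady state.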

4. A three-layer data maturity model

The whitepaper introduces a structured approach:

Layer 1: Data Streaming

A real-time, event-driven data foundation built on MQTT and a Unified Namespace

Layer 2: Data Intelligence

Semantic metadata, ontologies, and knowledge graphs to create contextualized data

Layer 3: Agentic Industrial Operations

Execution frameworks, policy boundaries, and audit mechanisms for safe operation
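To make Layers 1 and 2 more concrete, the sketch below builds a hierarchical topic of the kind commonly used in a Unified Namespace, then enriches a reading with relationships from a tiny set of knowledge-graph triples. The topic hierarchy, asset names, predicates, and payload fields are all assumptions made for illustration, and no MQTT client is used: the code only constructs the topic string and JSON payload that a publisher might send.

```python
import json


def uns_topic(site, hall, row, rack, metric):
    """Build a hierarchical topic path (hypothetical hierarchy)."""
    return "/".join([site, hall, row, rack, metric])


def make_message(topic, value, unit, ts):
    """Return the (topic, payload) pair a publisher might send."""
    return topic, json.dumps({"value": value, "unit": unit, "ts": ts})


# Layer 2: a toy knowledge graph as (subject, predicate, object) triples.
TRIPLES = [
    ("rack-07", "locatedIn", "row-b"),
    ("rack-07", "cooledBy", "crah-02"),
    ("rack-07", "fedBy", "pdu-11"),
    ("crah-02", "fedBy", "pdu-03"),
]


def objects(subject, predicate):
    """All objects linked to `subject` by `predicate`."""
    return [o for s, p, o in TRIPLES if s == subject and p == predicate]


def contextualize(topic, payload):
    """Attach cross-system relationships to a raw reading."""
    rack = topic.split("/")[3]  # rack is the fourth level in this hierarchy
    reading = json.loads(payload)
    reading["rack"] = rack
    reading["cooledBy"] = objects(rack, "cooledBy")
    reading["fedBy"] = objects(rack, "fedBy")
    return reading


topic, payload = make_message(
    uns_topic("dc1", "hall-a", "row-b", "rack-07", "inlet_temp"),
    value=24.5, unit="degC", ts=1700000000,
)
context = contextualize(topic, payload)
print(topic)
print(context)
```

The raw message says only that a temperature was measured. The contextualized reading also names the cooling unit and power feed tied to that rack, which is the kind of cross-system dependency an agent in Layer 3 would need before recommending a change.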

Key Outcomes

By adopting this model, organizations can improve energy efficiency and thermal performance while increasing usable capacity within existing infrastructure. The model also reduces uncertainty in operational decision-making, accelerates diagnostics and issue detection, and helps defer capital expenditure by improving overall utilization.

These outcomes result from coordinated optimization across power, cooling, and compute systems.

Why This Matters

Data center operations have become more complex, more dynamic, and more interconnected. The volume of telemetry now exceeds what can be continuously interpreted through human oversight alone.

Digital twins and agentic AI provide a way to manage this complexity through unified operational models, real-time reasoning, and coordinated action across systems.
