What’s New in HiveMQ 4.15?

The HiveMQ team is proud to announce the release of HiveMQ Enterprise MQTT Platform 4.15. This release launches the closed beta of HiveMQ Data Governance Hub - Data Validation, adds security enhancements, and improves usability.

Highlights

- Closed beta release of the HiveMQ Data Governance Hub - Data Validation

- HiveMQ Enterprise Security Extension enhancements

HiveMQ Data Governance Hub - Data Validation

Today’s release launches a closed beta of the first feature of our new HiveMQ Data Governance Hub: Data Validation. The new product gives you the ability to set policies that specify how data is handled within the HiveMQ platform to maximize the value of your IoT data pipelines right from the data source.

How it works

In the HiveMQ Data Governance Hub, data validation implements a declarative policy that checks whether your data sources are sending data in the data format you expect. This process ensures that the value of the data is assessed as early as possible in the data supply chain. Checks occur before your data reaches downstream devices or upstream services where costly validation must be handled by each subscribing client.

The HiveMQ Data Governance Hub - Data Validation feature allows you to:

- Enforce policies along the entire MQTT topic tree structure

- Validate MQTT messages against JSON Schema or Protobuf schemas

- Reroute valid and invalid MQTT messages to different topics based on the result of the data validation

- Increase the observability of bad clients with additional metrics and log statements

The data validation feature also adds new Policies and Schemas endpoints to the HiveMQ REST API. For more information, see HiveMQ REST API.

Example policy definition JSON:

{
  "id": "com.hivemq.policy.coordinates",
  "matching": {
    "topicFilter": "coordinates/+"
  },
  "validation": {
    "validators": [
      {
        "type": "schema",
        "arguments": {
          "strategy": "ALL_OF",
          "schemas": [
            {
              "schemaId": "gps_coordinates"
            }
          ]
        }
      }
    ]
  },
  "onFailure": {
    "pipeline": [
      {
        "id": "logFailure",
        "functionId": "log",
        "arguments": {
          "level": "WARN",
          "message": "$clientId sent invalid coordinates on topic '$topic' with result '$validationResult'"
        }
      }
    ]
  }
}

The example policy ensures that MQTT messages published to topics that match the coordinates/+ topic filter are dropped and not processed further when they do not match the gps_coordinates schema.

A logging message is printed to help debug the misbehaving clients.
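To make the scope of the coordinates/+ topic filter concrete, here is a minimal sketch of standard MQTT topic filter matching. It is an illustration only, not HiveMQ's implementation; the function name `topic_matches` and the sample topic names are made up for this example, and special handling of topics that start with `$` is left out.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic name matches a topic filter.

    '+' matches exactly one topic level. '#' matches all remaining levels
    (including the parent level) and is expected as the last filter level.
    """
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")

    for i, level in enumerate(filter_levels):
        if level == "#":
            # Multi-level wildcard: everything from this level on matches.
            return True
        if i >= len(topic_levels):
            # The filter has more levels than the topic.
            return False
        if level != "+" and level != topic_levels[i]:
            # A literal level that differs from the topic level.
            return False

    # Every filter level matched; the topic must not have extra levels.
    return len(filter_levels) == len(topic_levels)


if __name__ == "__main__":
    for name in ("coordinates/truck-1", "coordinates", "coordinates/eu/truck-1"):
        print(name, topic_matches("coordinates/+", name))
```

Under these rules, the example policy applies to topics exactly one level below coordinates, such as coordinates/truck-1, but not to coordinates itself or to deeper topics such as coordinates/eu/truck-1.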

The referenced gps_coordinates schema specifies the range of numbers that the latitude and longitude fields can contain with a standard JSON schema definition.

The schema is uploaded to the HiveMQ Data Governance Hub and is enforced for all MQTT messages that clients publish to topics that match the coordinates/+ topic filter.
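The gps_coordinates schema itself is not shown in this post. The sketch below uses a hypothetical stand-in (field names and ranges are assumptions) and a small hand-written checker to show how a drop-and-log outcome could play out for a single message. The checker covers only the tiny JSON Schema subset used here and is not how HiveMQ evaluates schemas. The log line is rendered with Python's `string.Template` simply because it shares the `$name` placeholder style of the policy message, and the content of `$validationResult` is invented for the example.

```python
import json
from string import Template

# Hypothetical stand-in for the gps_coordinates schema (not from the release).
GPS_COORDINATES_SCHEMA = {
    "type": "object",
    "properties": {
        "latitude": {"type": "number", "minimum": -90, "maximum": 90},
        "longitude": {"type": "number", "minimum": -180, "maximum": 180},
    },
    "required": ["latitude", "longitude"],
}

# Message template copied from the example policy's log function arguments.
LOG_MESSAGE = Template(
    "$clientId sent invalid coordinates on topic '$topic' "
    "with result '$validationResult'"
)


def validate(payload: bytes, schema: dict) -> list:
    """Return a list of problems; an empty list means the payload is valid."""
    try:
        doc = json.loads(payload)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return ["payload is not valid JSON"]
    if not isinstance(doc, dict):
        return ["payload is not a JSON object"]

    errors = [f"missing required field '{name}'"
              for name in schema["required"] if name not in doc]
    for name, rules in schema["properties"].items():
        if name not in doc:
            continue
        value = doc[name]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            errors.append(f"'{name}' is not a number")
        elif not rules["minimum"] <= value <= rules["maximum"]:
            errors.append(f"'{name}' is out of range")
    return errors


def handle_publish(client_id: str, topic: str, payload: bytes):
    """Return (forwarded, log_line); invalid messages are dropped and logged."""
    errors = validate(payload, GPS_COORDINATES_SCHEMA)
    if not errors:
        return True, None
    line = LOG_MESSAGE.substitute(
        clientId=client_id, topic=topic, validationResult="; ".join(errors)
    )
    return False, line


if __name__ == "__main__":
    print(handle_publish("truck-1", "coordinates/truck-1",
                         b'{"latitude": 48.5, "longitude": 12.1}'))
    print(handle_publish("truck-2", "coordinates/truck-2",
                         b'{"latitude": 123, "longitude": 12.1}'))
```

The second call returns a WARN-style line that names the client and topic, which mirrors the debugging aid described above.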

How it helps

The MQTT protocol is data agnostic. This means that your MQTT clients receive data regardless of whether the data is valid or not. Although engineering teams can implement validation logic for each service they build, the system-wide communication of such solutions is frequently complex and error-prone. Particularly when third-party data producers are in use, miscommunication can cause costly delays and long downtimes.

Our data validation feature lets your development teams use the HiveMQ Enterprise broker to automatically enforce a data validation strategy of their own design (including fine-tuned control over how the broker handles incoming valid and invalid MQTT messages).

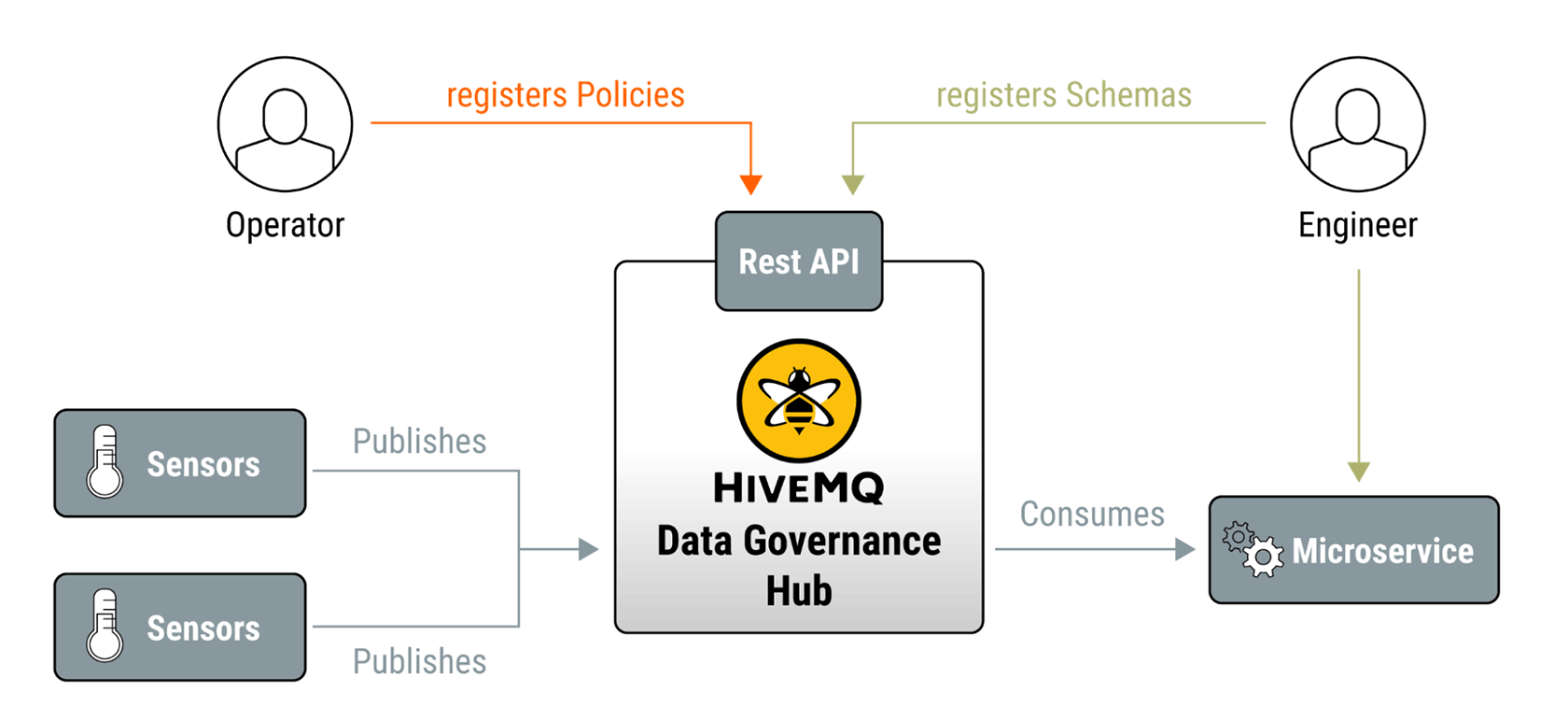

The following diagram illustrates a typical data validation workflow:

In this user journey, an engineering team develops a microservice that consumes data from specific MQTT topics. To ensure the reliability of the microservice, the engineers upload their accepted schema to the HiveMQ Data Governance Hub.

To enforce the schema validation, the operations team defines and registers an appropriate policy that tells HiveMQ how to handle incoming MQTT messages.

The result is an efficient microservice that only consumes MQTT messaging data in the expected format. This type of well-defined data validation can save time and resources:

- Enables parallel development

- Reduces the number of issues that reach production systems

- Simplifies the identification of invalid data sources

To get all the details on the first phase of our new HiveMQ Data Governance Hub or to request to join the closed beta, contact datagovernancehub@hivemq.com.

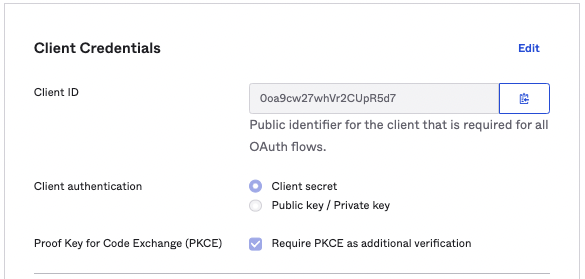

PKCE Support in the HiveMQ Enterprise Security Extension

The Enterprise Security Extension now supports PKCE (Proof Key for Code Exchange) for HiveMQ Control Center users that use OAuth2 for authentication. PKCE (RFC 7636) is an extension to the OAuth 2.0 protocol that increases security when requesting access tokens from identity management services such as Okta or Keycloak.

How it works

You can configure PKCE as an authorization code flow for the OAuth authentication manager in the control center pipeline of your Enterprise Security Extension.

<oauth-authentication-manager>
    <realm>oauth-realm-name</realm>
    <flow>authorization-code-pkce</flow>
    ...

Identity management services such as Okta provide matching configuration options:

How it helps

PKCE provides an additional level of protection against authorization code injection attacks for users of the HiveMQ Control Center when using OAuth2.
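For readers who want to see what the PKCE exchange adds to the authorization code flow, the following sketch follows the S256 method from RFC 7636. The extension and your identity provider handle these steps for you; the helper names here (`new_code_verifier`, `code_challenge_s256`, `server_accepts`) are invented for illustration and are not part of any HiveMQ API.

```python
import base64
import hashlib
import secrets


def _b64url(data: bytes) -> str:
    """Base64url-encode without '=' padding, as RFC 7636 requires."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def new_code_verifier() -> str:
    """Create a random code verifier.

    32 random bytes encode to 43 characters, the minimum length the RFC allows.
    """
    return _b64url(secrets.token_bytes(32))


def code_challenge_s256(verifier: str) -> str:
    """Derive the S256 code challenge: BASE64URL(SHA256(ASCII(verifier)))."""
    return _b64url(hashlib.sha256(verifier.encode("ascii")).digest())


def server_accepts(verifier: str, stored_challenge: str) -> bool:
    """Check an authorization server would perform at the token endpoint."""
    return secrets.compare_digest(code_challenge_s256(verifier), stored_challenge)


if __name__ == "__main__":
    verifier = new_code_verifier()              # kept secret by the client
    challenge = code_challenge_s256(verifier)   # sent with the authorization request
    print(server_accepts(verifier, challenge))            # matching verifier
    print(server_accepts(new_code_verifier(), challenge)) # different verifier
```

Because the token request must present a verifier that hashes to the challenge sent earlier, an attacker who only intercepts the authorization code cannot redeem it.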

New Preprocessors in the HiveMQ Enterprise Security Extension

HiveMQ 4.15 adds two preprocessors to the HiveMQ Enterprise Security Extension. The new preprocessors allow you to load ESE variables into the HiveMQ Connection Attribute Store and Session Attribute Store. This new capability makes it possible to visualize ESE variables on the client view of your HiveMQ Control Center. It also allows custom extensions to access ESE variable information via the Connection Attribute and Session Attribute Store.

How it works

The HiveMQ Enterprise Security Extension provides preprocessors that help you customize the authentication and authorization of your MQTT clients. During processing, you can set different types of variables. These variables can now be stored in the Connection Attribute Store and Session Attribute Store.

<connection-attribute-preprocessor>
    <from-ese-variable>string-1</from-ese-variable>
    <to-connection-attribute>my-attribute</to-connection-attribute>
</connection-attribute-preprocessor>

How it helps

You can use the Session Attribute Preprocessor to store relevant authentication information in the session attributes of a client. This makes it possible, for example, to read, process, and store claims from a JWT. The information remains visible for the duration of the client’s session in the client view of your HiveMQ Control Center. Additionally, you can now use session attribute information in your custom extensions for further processing.
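As a rough picture of the data flow, the sketch below decodes the claims of a JWT and copies selected claims into a plain dictionary that stands in for a client's session attributes. Everything here is illustrative: the function names, claim names, and the dictionary model are assumptions, the extension performs the real work through its configured preprocessors, and this code does not verify the token signature.

```python
import base64
import json


def encode_segment(obj: dict) -> str:
    """Build one unpadded base64url JWT segment (demo helper only)."""
    raw = json.dumps(obj).encode("utf-8")
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def decode_jwt_claims(token: str) -> dict:
    """Decode the payload segment of a JWT WITHOUT verifying the signature."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("expected header.payload.signature")
    payload = parts[1]
    payload += "=" * (-len(payload) % 4)  # restore the stripped padding
    return json.loads(base64.urlsafe_b64decode(payload))


def to_session_attributes(claims: dict, wanted: list) -> dict:
    """Copy the wanted claims into a dict that models session attributes."""
    return {name: str(claims[name]) for name in wanted if name in claims}


if __name__ == "__main__":
    # Hypothetical unsigned token with made-up claims.
    token = ".".join([
        encode_segment({"alg": "none"}),
        encode_segment({"sub": "sensor-7", "tenant": "plant-a"}),
        "signature-placeholder",
    ])
    claims = decode_jwt_claims(token)
    print(to_session_attributes(claims, ["tenant", "role"]))
```

Claims that are absent from the token are skipped, so only information that was actually presented at authentication ends up in the modeled session attributes.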

Additional Features and Improvements

HiveMQ Enterprise MQTT Broker

- Streamlined the collection of subscribers to achieve a more efficient distribution of the publish workload to all responsible nodes of a cluster.

- Fixed an issue that could negatively impact performance when the client event history feature is in use.

- Fixed an issue that prevented broker startup when a domain name is used in the static cluster discovery.

- Added metrics to provide increased observability for tasks that trigger changes in the overload protection levels.

- Improved the way that system metrics are handled to provide increased granularity.

- Added listener type and port information to the HiveMQ event log entries that SSL errors and ungraceful disconnects generate.

- Increased feedback in overload protection log file statements to improve observability.

HiveMQ Enterprise Extensions

- Aligned the default configuration location of the HiveMQ Enterprise Extension for Kafka, HiveMQ Enterprise Security Extension, HiveMQ Enterprise Extension for Amazon Kinesis, HiveMQ Enterprise Distributed Tracing Extension, HiveMQ Enterprise Bridge Extension, and HiveMQ Enterprise Extension for Google Cloud Pub/Sub to conf/config.xml for increased consistency and ease of use.

NOTE: The previously used configuration file locations are still supported, but may be deprecated in future versions. For more information, see New Location for HiveMQ Enterprise Extension Configuration Files.

HiveMQ Enterprise Distributed Tracing Extension

- Updated span attribute names in the HiveMQ Enterprise Distributed Tracing Extension to support new OpenTelemetry semantic conventions.

Get Started Today

To upgrade to HiveMQ 4.15 from a previous HiveMQ version, take a look at our HiveMQ Upgrade Guide. To learn more about all the features we offer, explore the HiveMQ User Guide.

HiveMQ Team
